The Next Access Review Won’t Be for People. It’ll Be for AI Agents

Every quarter, enterprises ask familiar questions about human access.

Does this user still need access to this application?

Does this admin still need elevated privileges?

Is this service account still active?

Are these permissions still aligned to the person’s role?

What access should be revoked before it becomes a problem?

We know this motion. It is not perfect, but the control is familiar. Identity governance, access reviews, privileged access management, role-based access, conditional access, logging, and recertification all exist because organizations eventually learned that unmanaged access becomes a risk.

Now we need to ask a harder question.

What happens when the next access review is not for a person?

What happens when it is for an AI agent?

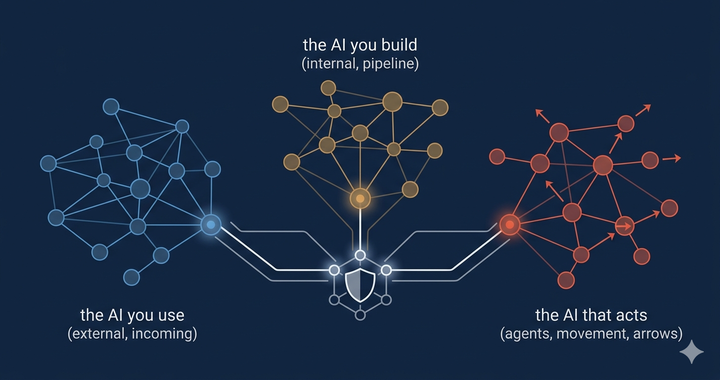

That is where enterprise security is headed. AI agents are beginning to read documents, query systems, summarize records, call APIs, trigger workflows, create tickets, write code, send messages, and make decisions across business processes. They are not just answering questions. They are taking actions.

And once a system can take action, access review becomes more than an identity governance task. It becomes an architectural requirement.

AI agents change the access problem

A chatbot responds.

An agent acts.

That distinction sounds small, but it changes the risk model. A traditional AI assistant may generate text, summarize a document, or answer a question. An agentic system can interpret a goal, decide which tool to use, retrieve data, call an external service, update a record, or trigger another workflow.

Joint guidance from ASD’s Australian Cyber Security Centre, CISA, NSA, the Canadian Centre for Cyber Security, New Zealand’s NCSC, and the UK NCSC describes agentic AI systems as systems that rely on an AI model, such as an LLM, to interpret the state of the world, make decisions, and take actions. These systems may include external tools, external data sources, memory, and planning workflows. The guidance also notes that agentic AI systems are intended to operate without continuous human intervention.

That means the security conversation cannot stop at the question of whether the model is safe.

The better question is: What authority have we delegated to the agent?

An AI agent with no tools can give a bad answer.

An AI agent with excessive permissions can create a bad outcome.

That is the difference enterprise security teams need to internalize.

The old access model assumes a human is in the loop

Most identity programs were designed around people.

A user logs in.

The user authenticates.

The user is mapped to a role.

The role grants access to applications and data.

Security tools monitor the session.

Periodic reviews determine whether access remains appropriate.

That model starts to break down when the actor is an AI agent.

An agent may act on behalf of a user. It may act through a service account. It may use an OAuth token. It may call APIs directly. It may access memory from previous interactions. It may retrieve information from a knowledge base. It may communicate with another agent. It may be embedded in a SaaS platform, run locally on an endpoint, or operate within a custom workflow.

In that environment, asking “which user logged in?” is not enough.

Security teams need to know:

What agent took the action?

Who owns that agent?

What identity did it use?

What user or workflow initiated the request?

What tools could it access?

What data did it retrieve?

What action did it take?

Was that action approved by policy?

Could the same action occur again without anyone noticing?

This is why AI agents need to be treated as identity-bearing entities, not just features inside an application.

The real issue is not just access control. It is action control.

Zero Trust has always been useful because it moves security away from implicit trust. NIST SP 800-207 describes Zero Trust as a shift from static, network-based perimeters toward a focus on users, assets, and resources. It also states that authentication and authorization should occur before a session to an enterprise resource is established.

That remains the right foundation.

But agentic AI pushes Zero Trust into a new phase.

Traditional Zero Trust often focuses on whether an identity can reach a resource. Can this user access this application? Can this device connect to this service? Can this workload communicate with this API?

AI agents require one more layer.

What can this actor do after access is granted?

That is action control.

An agent may legitimately need to read a ticket, but not close it.

It may need to summarize an email, but not send one.

It may need to query customer data, but not export it.

It may need to recommend a firewall change, but not implement it.

It may need to generate code, but not commit to production.

It may need to identify a security incident, but not disable a control.

The access decision and the action decision are not the same thing.

If, over the last decade, Zero Trust was about verifying access, then Zero Trust for AI agents will be about verifying authority at the level of individual actions.
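To make the distinction concrete, here is a minimal sketch in Python. The `AgentGrant` structure, the agent name, and the resource and action names are all hypothetical, chosen only for illustration. The point is that "can the agent reach the resource" and "can the agent take this action on it" are separate checks.

```python
from dataclasses import dataclass, field


@dataclass
class AgentGrant:
    """Hypothetical grant: the resources an agent may reach and what it may do there."""
    agent_id: str
    # resource name -> set of actions allowed on that resource
    allowed_actions: dict[str, frozenset[str]] = field(default_factory=dict)


def can_access(grant: AgentGrant, resource: str) -> bool:
    """Access decision: may the agent reach the resource at all?"""
    return resource in grant.allowed_actions


def can_perform(grant: AgentGrant, resource: str, action: str) -> bool:
    """Action decision: may the agent take this specific action on the resource?"""
    return action in grant.allowed_actions.get(resource, frozenset())


grant = AgentGrant(
    agent_id="ticket-summarizer",
    allowed_actions={
        "tickets": frozenset({"read"}),
        "email": frozenset({"read", "draft"}),
    },
)

print(can_access(grant, "tickets"))            # True: the agent can reach tickets
print(can_perform(grant, "tickets", "read"))   # True: it may read a ticket
print(can_perform(grant, "tickets", "close"))  # False: it may not close one
print(can_perform(grant, "email", "send"))     # False: it may draft, not send
```

In a real deployment these checks would sit in a policy engine rather than in application code, but the shape of the question is the same.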

“Excessive agency” is the risk hiding in plain sight

In its Top 10 for LLM Applications, OWASP describes Excessive Agency as a vulnerability where damaging actions can be performed because an LLM-based system has too much functionality, too many permissions, or too much autonomy.

That is exactly the issue enterprises need to address.

The problem is not that agents are useful. They are useful.

The problem is that usefulness often leads to the expansion of permissions before governance catches up.

A team starts with an agent that summarizes support tickets. Then it gives the agent access to customer records. Then it lets the agent draft responses. Then it connects the agent to workflow automation. Then someone adds the ability to update account fields. Then another team integrates it with billing, notifications, or case escalation.

Each step may seem reasonable in isolation.

But over time, the agent becomes a privileged actor.

That is how scope creep happens. It is already a common problem with both human and service accounts. AI agents make it harder because they can combine data, tools, memory, and decision-making in ways that are less predictable than traditional automation.

The risk is not only that an attacker compromises an agent. The risk is also that the agent behaves exactly as designed, but the design gave it too much authority.

Enterprises need AI agent access reviews

This is where security teams need a new operating motion.

We already review user access.

We already review privileged accounts.

We already review service accounts.

We already review application permissions.

Now we need to review agent authority.

An AI agent access review should not be a generic checkbox that says “approved AI tool.” It should be a structured review of what the agent is, what it can do, and whether that authority still makes sense.

At a minimum, every agent access review should answer nine questions.

1. What is the agent’s approved purpose?

Every agent should have a defined business purpose.

Not “productivity.”

Not “automation.”

Not “AI assistant.”

Those are too broad.

The purpose should be specific enough to constrain behavior. For example:

Summarize support tickets for Tier 1 analysts.

Draft, but do not send, customer response emails.

Recommend security policy changes, but do not deploy them.

Query internal knowledge bases for employee support.

Create incident summaries from approved telemetry sources.

A clear purpose becomes the basis for every access decision that follows.

If the agent’s permissions are broader than its purpose, the permissions are wrong.

2. Who owns the agent?

Every agent needs a business owner and a technical owner.

The business owner is accountable for why the agent exists.

The technical owner is accountable for how the agent operates.

The security team is accountable for policy, monitoring, and control expectations.

Without ownership, agents become the next generation of orphaned service accounts.

That cannot happen.

If no one can explain why the agent exists, what systems it touches, and what risk it introduces, it should not have enterprise access.

3. What identity does the agent use?

An agent should not hide behind a generic shared account.

It should have a distinct identity that can be monitored, governed, and revoked.

The joint agentic AI guidance recommends constructing each agent as a distinct principal with its own cryptographically anchored identity, maintaining a trusted registry, denying access to agents or keys not in that registry, and limiting permissions to the minimum scope required for approved tasks.

That is the right direction.

If an agent acts, the enterprise should be able to trace that action back to the specific agent, the initiating user or workflow, the tool used, the data accessed, and the policy decision that allowed it.

If the answer is “the action came from a shared API key,” that is not identity governance. That is a blind spot.
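As a rough illustration of the registry idea, the sketch below denies any agent or key that is not registered. The registry contents, agent name, and key bytes are made up for the example. Comparing key fingerprints is a simplification: a production system would more likely verify a signature or certificate chain.

```python
import hashlib
import hmac

# Hypothetical trusted registry: agent ID -> SHA-256 fingerprint of its registered key.
REGISTRY = {
    "ticket-summarizer": hashlib.sha256(b"demo-public-key-1").hexdigest(),
}


def is_registered(agent_id: str, presented_key: bytes) -> bool:
    """Deny by default: unknown agents and unknown keys are both rejected."""
    expected = REGISTRY.get(agent_id)
    if expected is None:
        return False  # agent is not in the registry
    presented = hashlib.sha256(presented_key).hexdigest()
    # Constant-time comparison of the two hex fingerprints.
    return hmac.compare_digest(expected, presented)


print(is_registered("ticket-summarizer", b"demo-public-key-1"))  # True
print(is_registered("ticket-summarizer", b"some-other-key"))     # False
print(is_registered("unknown-agent", b"demo-public-key-1"))      # False
```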

4. What tools can the agent call?

Tools are where agentic AI becomes operational.

A model that can only generate text has limited reach. A model that can call tools can affect real systems.

That means tool access needs to be reviewed as carefully as application access.

Security teams should know:

Which tools can the agent call?

Which APIs can it reach?

Can it read, write, delete, approve, or execute?

Are any tools open-ended, such as shell access or unrestricted web access?

Can the agent install plugins or connect to new tools?

Can it call another agent?

Can a tool return instructions back into the agent’s context?

The safest model is not “let the agent decide from everything available.”

The safest model is “give the agent only the tools needed for the approved task.”
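One way to turn the questions above into a repeatable check is a small review helper. This is a sketch under assumptions: the `ToolGrant` fields, the list of high-impact verbs, and the tool names are hypothetical, not taken from any particular agent framework.

```python
from dataclasses import dataclass


@dataclass
class ToolGrant:
    """Hypothetical record of one tool an agent is allowed to call."""
    name: str
    verbs: set[str]            # e.g. {"read"} or {"read", "write"}
    open_ended: bool = False   # shell access, unrestricted web access, plugin install


# Assumed list of verbs that deserve extra scrutiny in a review.
HIGH_IMPACT_VERBS = {"write", "delete", "approve", "execute"}


def review_findings(grants: list[ToolGrant], needed_tools: set[str]) -> list[str]:
    """Return human-readable findings for a reviewer to confirm or reject."""
    findings = []
    for grant in grants:
        if grant.name not in needed_tools:
            findings.append(f"{grant.name}: not needed for the approved task")
        if grant.open_ended:
            findings.append(f"{grant.name}: open-ended tool")
        risky = sorted(grant.verbs & HIGH_IMPACT_VERBS)
        if risky:
            findings.append(f"{grant.name}: high-impact verbs {risky}")
    return findings


grants = [
    ToolGrant("read_ticket", {"read"}),
    ToolGrant("update_ticket", {"read", "write"}),
    ToolGrant("shell", {"execute"}, open_ended=True),
]

for finding in review_findings(grants, needed_tools={"read_ticket", "update_ticket"}):
    print(finding)
```

A finding is not automatically a violation. It is a prompt for the reviewer to decide whether the grant still matches the agent's purpose.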

5. What data can the agent access?

Agent access reviews need to include the data scope.

This is especially important because agents often work through natural language. Sensitive context may not look like a traditional file transfer. It may appear in a prompt, a retrieved document, a chat history, a summarized record, or memory accumulated over time.

Security teams should ask:

Can the agent access regulated data?

Can it access customer records?

Can it access employee data?

Can it access source code?

Can it access credentials, secrets, or configuration files?

Can it retain data in memory?

Can it send data to external systems?

Can it combine low-risk data sources into high-risk context?

The last question matters.

AI systems can create risk not only by accessing a single sensitive record but also by combining multiple pieces of information into a more sensitive whole.

That is why data governance for agents needs to account for the accumulation of context, not just individual data elements.
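A simple way to picture the accumulation problem is to check combinations rather than single sources. In this sketch the source names, attribute names, and combination rules are invented for illustration. Real rules would come from the organization's own data classification policy.

```python
# Hypothetical map of data sources to the attributes each one exposes.
SOURCE_ATTRIBUTES = {
    "crm_export": {"full_name", "postal_code"},
    "hr_directory": {"full_name", "date_of_birth"},
}

# Assumed rules: attribute sets that are more sensitive together than apart.
SENSITIVE_COMBINATIONS = [
    frozenset({"full_name", "date_of_birth", "postal_code"}),
]


def flagged_combinations(source_names: list[str]) -> list[frozenset[str]]:
    """Return the sensitive combinations reachable across all given sources."""
    accumulated: set[str] = set()
    for name in source_names:
        accumulated |= SOURCE_ATTRIBUTES.get(name, set())
    return [combo for combo in SENSITIVE_COMBINATIONS if combo <= accumulated]


print(len(flagged_combinations(["crm_export"])))                  # 0
print(len(flagged_combinations(["hr_directory"])))                # 0
print(len(flagged_combinations(["crm_export", "hr_directory"])))  # 1
```

Neither source triggers the rule on its own. The agent that can read both is the one that needs review.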

6. What level of autonomy is allowed?

Not every agent needs the same level of independence.

Some agents should only recommend.

Some can draft.

Some can retrieve.

Some can update low-risk records.

Some may eventually take limited actions without approval.

But autonomy should be graduated, intentional, and reversible.

The joint guidance recommends mechanisms for human control and oversight to ensure that systems approved for non-sensitive, low-risk tasks cannot autonomously progress to higher-risk activities. It also recommends human control points such as live monitoring, interruption during task execution, mandatory approval for decision steps, auditing, and reversibility.

That should become standard enterprise practice.

The question is not whether the agent can act.

The question is which actions require approval.

A practical model might look like this:

Low-risk actions: agent can complete automatically.

Moderate-risk actions: agent can complete with logging and post-action review.

High-risk actions: agent can recommend, but a human must approve.

Critical actions: agent cannot perform them at all.

This is how organizations avoid giving agents a straight path from suggestion to execution.
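The four tiers above could be expressed as a small policy function. The sketch below is illustrative only: the action names and their risk assignments are hypothetical, and treating unclassified actions as critical is an assumption made here so that the default is to deny.

```python
from enum import Enum


class Risk(Enum):
    LOW = "low"
    MODERATE = "moderate"
    HIGH = "high"
    CRITICAL = "critical"


class Decision(Enum):
    EXECUTE = "execute"
    EXECUTE_AND_REVIEW = "execute, then log for post-action review"
    AWAIT_APPROVAL = "recommend only, wait for human approval"
    DENY = "deny"


# Hypothetical classification of actions into risk tiers.
ACTION_RISK = {
    "summarize_ticket": Risk.LOW,
    "update_account_field": Risk.MODERATE,
    "send_customer_email": Risk.HIGH,
    "disable_security_control": Risk.CRITICAL,
}


def decide(action: str, human_approved: bool = False) -> Decision:
    """Map an action to a decision; unclassified actions are treated as critical."""
    risk = ACTION_RISK.get(action, Risk.CRITICAL)
    if risk is Risk.LOW:
        return Decision.EXECUTE
    if risk is Risk.MODERATE:
        return Decision.EXECUTE_AND_REVIEW
    if risk is Risk.HIGH:
        return Decision.EXECUTE if human_approved else Decision.AWAIT_APPROVAL
    return Decision.DENY  # critical actions are never performed by the agent


print(decide("summarize_ticket").name)                           # EXECUTE
print(decide("send_customer_email").name)                        # AWAIT_APPROVAL
print(decide("send_customer_email", human_approved=True).name)   # EXECUTE
print(decide("disable_security_control", human_approved=True).name)  # DENY
```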

7. What monitoring exists?

If an agent takes action, the organization needs evidence.

Not just inputs and outputs. Not just whether the session occurred. Not just whether the API was called.

Security teams need enough telemetry to reconstruct what happened.

The joint guidance recommends monitoring all agent operations, including internal processes, not only inputs and outputs. It also recommends monitoring user prompts, tool calls, memory interactions, internal reasoning, decisions made, actions taken, identity changes, privilege changes, and anomalous behavior.

That is a higher bar than traditional application logging.

For agents, the audit trail should capture the chain of authority:

Who initiated the task?

Which agent handled it?

What context did it use?

What tools did it call?

What data did it retrieve?

What decision did it make?

What action did it take?

What policy allowed or denied the action?

Was a human approval required?

Was the action reversible?

If the organization cannot answer those questions, it does not have enough visibility.
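As a sketch of what one audit record might carry, the structure below maps each question in the list to a field. The field names and sample values are hypothetical, and the JSON line format is just one reasonable choice for shipping records to a SIEM.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class AgentAuditRecord:
    """Hypothetical audit record covering the chain of authority for one action."""
    initiator: str                  # who initiated the task
    agent_id: str                   # which agent handled it
    context_refs: list[str]         # what context it used
    tool_calls: list[str]           # what tools it called
    data_retrieved: list[str]       # what data it retrieved
    decision: str                   # what decision it made
    action: str                     # what action it took
    policy_id: str                  # which policy was evaluated
    policy_result: str              # "allow" or "deny"
    human_approval_required: bool
    reversible: bool
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def to_log_line(record: AgentAuditRecord) -> str:
    """Serialize the record as a single JSON line with stable key order."""
    return json.dumps(asdict(record), sort_keys=True)


record = AgentAuditRecord(
    initiator="user:jdoe",
    agent_id="ticket-summarizer",
    context_refs=["ticket:1042"],
    tool_calls=["read_ticket"],
    data_retrieved=["ticket:1042"],
    decision="summarize",
    action="post_summary",
    policy_id="policy-tier1-summaries",
    policy_result="allow",
    human_approval_required=False,
    reversible=True,
)
print(to_log_line(record))
```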

8. How is access revoked?

Every agent needs a kill switch.

That does not mean shutting down the entire AI platform. It means security teams need a defined way to quickly reduce or revoke an agent’s authority.

Can the agent’s credentials be disabled?

Can its tool access be removed?

Can its memory be isolated?

Can its API access be blocked?

Can its autonomy level be reduced?

Can its actions be rolled back?

Can the system fail safe when confidence drops?

The joint guidance recommends strict privilege management, just-in-time credentials for high-impact or privileged actions, fresh cryptographic proof before privileged calls, and continuous verification of identity and authorization at runtime through a centralized policy decision point.

That is not theoretical. It is the practical foundation for containing agent risk.

A compromised human account is bad.

A compromised service account is worse.

A compromised agent with broad access to tools, persistent memory, and weak monitoring may be worse still.

Revocation has to be built in before the incident.
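Here is a minimal sketch of how short-lived, scoped credentials and a per-agent kill switch might fit together. Everything in it is an assumption made for illustration: the `JitCredential` shape, the in-memory revocation set, and the scope names. The clock is passed in explicitly so the behavior is easy to test.

```python
from dataclasses import dataclass

# Hypothetical in-memory revocation list; a real system would use a shared store.
REVOKED_AGENTS: set[str] = set()


@dataclass(frozen=True)
class JitCredential:
    """Hypothetical just-in-time credential bound to one agent and one scope."""
    agent_id: str
    scope: str
    expires_at: float  # seconds, on the same clock as `now` below


def issue(agent_id: str, scope: str, ttl_seconds: float, now: float) -> JitCredential:
    """Issue a credential that expires `ttl_seconds` after `now`."""
    return JitCredential(agent_id, scope, now + ttl_seconds)


def is_valid(cred: JitCredential, scope: str, now: float) -> bool:
    """Checked at every privileged call, not only at issuance."""
    return (
        cred.agent_id not in REVOKED_AGENTS
        and cred.scope == scope
        and now < cred.expires_at
    )


def revoke_agent(agent_id: str) -> None:
    """Kill switch: invalidates every credential held by this agent."""
    REVOKED_AGENTS.add(agent_id)


cred = issue("ticket-summarizer", "tickets:write", ttl_seconds=300, now=1000.0)
print(is_valid(cred, "tickets:write", now=1100.0))   # True: in scope, not expired
print(is_valid(cred, "billing:write", now=1100.0))   # False: wrong scope
print(is_valid(cred, "tickets:write", now=1400.0))   # False: expired
revoke_agent("ticket-summarizer")
print(is_valid(cred, "tickets:write", now=1100.0))   # False: agent revoked
```

Because validity is checked on every call, revoking the agent takes effect immediately instead of waiting for the credential to expire.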

9. Has the agent’s authority expanded over time?

The most important access review question may be the simplest:

Has this agent accumulated more authority than it was originally approved to have?

This is where agent access reviews become valuable.

The initial deployment may be low-risk. The problem is what happens later.

A new plugin gets added.

A new data source gets connected.

A new workflow is automated.

A new business unit adopts the agent.

A temporary permission becomes permanent.

A read-only integration becomes read-write.

A recommendation workflow becomes an approval workflow.

This is the familiar story of access creep, but with a more capable actor.

That is why agent access reviews should be both recurring and event-driven. In addition to the regular review cycle, a review should be triggered whenever an agent gains a new tool, touches a new data class, expands to a new business process, receives write privileges, or moves from human-approved actions to autonomous execution.
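Detecting that kind of expansion can be as simple as comparing what was approved with what is currently granted. The sketch below assumes both are available as sets grouped by category. The category names and values are hypothetical.

```python
def authority_drift(
    approved: dict[str, set[str]], current: dict[str, set[str]]
) -> dict[str, set[str]]:
    """Return everything currently granted that was not in the approved baseline."""
    drift = {}
    for category, granted in current.items():
        extra = granted - approved.get(category, set())
        if extra:
            drift[category] = extra
    return drift


# Hypothetical baseline recorded at the last review.
approved = {
    "tools": {"read_ticket"},
    "data": {"tickets"},
    "verbs": {"read"},
}

# Hypothetical grants observed today.
current = {
    "tools": {"read_ticket", "update_account"},
    "data": {"tickets", "billing"},
    "verbs": {"read", "write"},
}

for category, extra in sorted(authority_drift(approved, current).items()):
    print(category, sorted(extra))
```

Any non-empty result is a trigger for review, whether or not the quarterly cycle has come around.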

Where SASE and Zero Trust fit

This does not mean enterprises need to throw away their existing security architecture.

It means they need to extend it.

SASE, SSE, CASB, ZTNA, IAM, PAM, API security, SIEM, SOAR, data governance, and cloud security controls all have a role to play. The goal is not to build a separate security stack for AI agents. The goal is to make the existing control plane agent-aware.

SASE and SSE can help enforce policy and visibility across users, SaaS, private applications, cloud services, and internet destinations. IAM and PAM can help govern identities and privileges. API security can help control tool invocation. SIEM and SOAR can help correlate agent behavior with broader enterprise telemetry. Data governance tools can help classify what agents are allowed to access or transmit.

But the architecture has to recognize a new kind of actor.

An AI agent is not just another user.

It is not just another application.

It is not just another service account.

It is a non-human identity with delegated authority and the ability to operate across systems.

That is the lens security teams need to bring into Zero Trust planning.

The first meeting security teams should schedule

If I were advising an enterprise starting from scratch, I would not begin with a massive AI governance program.

I would start with a focused working session.

Bring together security architecture, IAM, data governance, application owners, legal or compliance, and the teams already experimenting with agents.

Then ask one question:

Which AI agents in our environment can take action today?

Not which AI tools exist.

Not which copilots are enabled.

Not which models are approved.

Which agents can act?

From there, build the first inventory.

For each agent, document:

Owner

Purpose

Identity

Data access

Tool access

Autonomy level

Approval requirements

Logging coverage

Credential model

Revocation path

Business impact if misused

That inventory becomes the starting point for AI agent access reviews.
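The inventory does not need special tooling to start. As a sketch, each entry could be a simple record with one field per item in the list above, plus a check for what is still undocumented. The field names mirror the list, and the sample values are hypothetical.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class AgentInventoryEntry:
    """Hypothetical inventory record; one field per item in the list above."""
    owner: Optional[str] = None
    purpose: Optional[str] = None
    identity: Optional[str] = None
    data_access: Optional[str] = None
    tool_access: Optional[str] = None
    autonomy_level: Optional[str] = None
    approval_requirements: Optional[str] = None
    logging_coverage: Optional[str] = None
    credential_model: Optional[str] = None
    revocation_path: Optional[str] = None
    business_impact: Optional[str] = None


def missing_fields(entry: AgentInventoryEntry) -> list[str]:
    """List the fields that are still empty, in declaration order."""
    return [f.name for f in fields(entry) if not getattr(entry, f.name)]


entry = AgentInventoryEntry(
    owner="support-operations",
    purpose="Summarize support tickets for Tier 1 analysts",
    identity="agent:ticket-summarizer",
)

print(missing_fields(entry))
```

An entry with gaps is still useful. The gaps tell the working session where to look next.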

Not perfect governance. Not bureaucracy. Not another committee that slows everything down.

Just visibility, ownership, and control over the systems that can now act on the organization’s behalf.

The bottom line

AI agents will become part of the enterprise technology stack.

They will help teams move faster, reduce manual work, improve response times, and automate processes that previously required human effort. But they also introduce a new identity and access problem that most security programs have not yet fully addressed.

The security model cannot stop at “the user was authenticated.”

It has to ask:

What agent acted?

What authority did it use?

What action did it take?

Was that action allowed?

Was it monitored?

Could it be reversed?

Should that agent still have the same authority next quarter?

That is the next evolution of access governance.

The next access review will not be limited to employees, admins, contractors, and service accounts.

It will be for AI agents.

And the organizations that figure this out early will be in a much better position than the ones that wait until autonomous access becomes another unmanaged identity problem hiding in plain sight.
