Extending Zero Trust into AI: An Architect's View

Something shifted at RSAC 2026 last week. The conversations that previously orbited around "how do we secure AI" moved toward something more specific and more urgent: how do we extend Zero Trust, the framework most enterprises have been building toward for the better part of a decade, to cover AI systems that don't behave like anything Zero Trust was originally designed to protect.

Microsoft published a formal Zero Trust for AI framework the week of the conference. Cisco announced a sweeping set of new controls specifically for the agentic AI ecosystem. The Cloud Security Alliance published its own governance specification. This is a lot of attention landing in a short window, and it reflects something real. The AI attack surface has expanded faster than most security architectures have adapted.

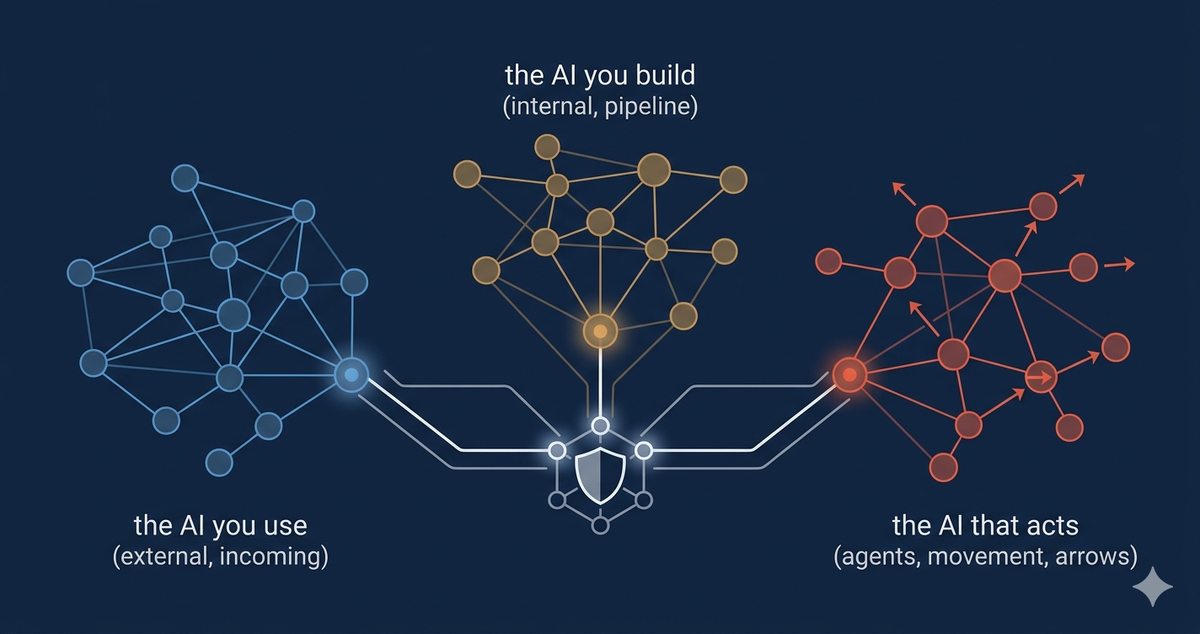

I've been thinking about this through a specific lens. When you look at how enterprises actually use AI today, there are three meaningfully different problems, each requiring Zero Trust to be extended in a different way. The AI that your employees use. The AI that your teams build. And the AI that acts on your behalf. Each of these sits in a different part of your environment, touches different assets, and creates risks that don't map cleanly onto the same controls.

The AI You Use: A Data Governance and Identity Problem

Most enterprises are somewhere in the middle of figuring out how to govern the AI tools their people are already using. SaaS AI products, third-party APIs, embedded copilots in productivity software. I wrote about this in October, and the core problem hasn't changed: your employees are sharing data with external AI systems through interfaces that your existing DLP tools weren't designed to inspect.

The Zero Trust framing here is straightforward in principle. Every AI service is a third-party system. It needs to be authenticated through your identity provider before anyone in your organization can reach it. Traffic to and from it should flow through your inspection plane, whether that's a CASB or a SASE architecture. Access should be governed by policy, tied to user identity and role, and logged.
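To make that concrete, here is a minimal sketch of a default-deny access decision for AI services. Everything in it is an assumption for illustration: the service names, the roles, the `POLICY` table, and the in-memory `AUDIT_LOG` stand in for whatever your identity provider, CASB, or SASE platform actually exposes.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AccessRequest:
    user: str
    role: str
    service: str             # hypothetical AI service identifier
    idp_authenticated: bool  # True only if the session came through the IdP


# Hypothetical policy table: which roles may reach which AI services.
POLICY = {
    "chat-assistant": {"engineer", "analyst"},
    "code-copilot": {"engineer"},
}

# Stand-in for a real log sink; every decision is recorded, allow or deny.
AUDIT_LOG: list[dict] = []


def authorize(req: AccessRequest) -> bool:
    """Default-deny: unknown services and unauthenticated users are refused."""
    allowed_roles = POLICY.get(req.service, set())
    decision = req.idp_authenticated and req.role in allowed_roles
    AUDIT_LOG.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "user": req.user,
        "service": req.service,
        "allowed": decision,
    })
    return decision
```

Calling `authorize(AccessRequest("ana", "engineer", "code-copilot", True))` returns `True`, while the same request for a service missing from `POLICY` is denied. The point of the sketch is the shape: identity first, policy second, and a log entry either way.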

What makes this harder in practice is that much of the data exposure occurs within the conversation itself. Traditional DLP can catch known patterns in discrete transfers: credentials, structured data, and content that matches a defined policy. What it struggles with is sensitive context that accumulates across a multi-turn session in natural language, rephrased, fragmented, or embedded in the flow of a conversation in ways that don't match any pattern a rule-based tool was written to catch. That's a different inspection problem, and it's one most Zero Trust implementations weren't built to address.

The architectural response is to treat AI-aware inspection as a first-class capability within your enforcement plane, rather than an extension of existing DLP. That doesn't solve everything, and the tooling is still maturing, but it's the right direction.
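One way to picture the difference is that the inspection state lives at the session level, not the message level. The sketch below is only an illustration of that idea: `toy_classifier` is a hypothetical keyword stand-in for whatever AI-aware classifier you would actually use, and the topic labels and `max_topics` threshold are invented for the example.

```python
from collections import defaultdict
from typing import Callable


def toy_classifier(message: str) -> set[str]:
    """Hypothetical stand-in: returns the sensitive topics a message touches.

    A real AI-aware inspector would use a model here, not keywords.
    """
    topics = {
        "customer": "customer_identity",
        "revenue": "financials",
        "acquisition": "m_and_a",
    }
    lowered = message.lower()
    return {label for word, label in topics.items() if word in lowered}


class SessionInspector:
    """Tracks sensitive topics per session instead of judging each turn alone."""

    def __init__(self, classify: Callable[[str], set[str]], max_topics: int = 2):
        self.classify = classify
        self.max_topics = max_topics
        self.seen: dict[str, set[str]] = defaultdict(set)

    def inspect(self, session_id: str, message: str) -> str:
        # Accumulate what this session has disclosed so far.
        self.seen[session_id] |= self.classify(message)
        if len(self.seen[session_id]) > self.max_topics:
            return "block"
        return "allow"
```

With the default threshold, three turns that each mention one topic are individually unremarkable, but the third turn pushes the session over the limit and is blocked. A per-message rule would have allowed all three.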

The AI You Build: A Supply Chain and Workload Identity Problem

This is the surface area that gets the least attention in most security conversations, even though it carries some of the most significant risks for organizations building proprietary AI systems.

When your teams are training models on internal data, fine-tuning foundation models on proprietary content, or building inference pipelines that process sensitive workloads, you've introduced a class of systems that Zero Trust needs to treat as first-class workload identities. The training data, the model artifacts sitting in a registry, and the inference endpoint serving requests to internal applications are each assets with access requirements that need to be governed.

The supply chain dimension is worth taking seriously for organizations that consume or build on top of open-source or third-party model artifacts. Security researchers discovered malicious models on Hugging Face in early 2025 that exploited Python's pickle serialization to execute arbitrary code when loaded. The model registry is an attack surface. The training pipeline is an attack surface. The dependencies your ML team pulls into the environment are an attack surface, just like any software supply chain. This isn't a concern for every enterprise running a managed AI service, but for teams building on top of external model artifacts, it deserves the same scrutiny you'd apply to any third-party code dependency.
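To show why loading a serialized model is equivalent to running code, here is a rough sketch that inspects a pickle stream without ever loading it, assuming the serialization in question is Python's pickle format. It uses the standard library's `pickletools.genops`; the `SUSPICIOUS_OPCODES` set and the `Payload` class are my own illustrative choices, not a vetted detection rule.

```python
import pickle
import pickletools

# Opcodes that import names or invoke callables during unpickling.
SUSPICIOUS_OPCODES = {
    "GLOBAL", "STACK_GLOBAL", "REDUCE", "INST", "OBJ", "NEWOBJ", "NEWOBJ_EX",
}


def suspicious_opcodes(data: bytes) -> set[str]:
    """Walk the opcode stream statically; nothing is deserialized here."""
    found = {opcode.name for opcode, _arg, _pos in pickletools.genops(data)}
    return found & SUSPICIOUS_OPCODES


class Payload:
    """Illustrative only: __reduce__ tells pickle what to call at load time."""

    def __reduce__(self):
        return (print, ("this would run when the file is loaded",))


benign = pickle.dumps({"weights": [0.1, 0.2]})
hostile = pickle.dumps(Payload())  # serializing does not execute the call
```

Here `suspicious_opcodes(benign)` is empty, while `suspicious_opcodes(hostile)` includes `REDUCE`. Legitimate model checkpoints often use these same opcodes, so a practical scanner would more likely compare the imported names against an allowlist than flag the opcodes outright.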

Applying Zero Trust here means treating the ML pipeline as you would any other privileged workload. Least-privilege access to training data. Managed identities for automated jobs, not standing service accounts with broad permissions. Artifact signing for model checkpoints. Isolation for training environments that touch sensitive data. None of this is exotic, but it requires deliberately extending your Zero Trust architecture into a part of the environment that security teams often don't own or fully understand.
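As a small illustration of the artifact-signing item, the sketch below tags a checkpoint with an HMAC-SHA256 and refuses anything that fails verification. This is a simplification under stated assumptions: it uses a shared secret from the standard library's `hmac` module, whereas a production pipeline would more plausibly use asymmetric signatures and a managed key service. The function names are hypothetical.

```python
import hashlib
import hmac


def sign_artifact(artifact: bytes, key: bytes) -> str:
    """Return a hex HMAC-SHA256 tag over the raw checkpoint bytes."""
    return hmac.new(key, artifact, hashlib.sha256).hexdigest()


def verify_artifact(artifact: bytes, key: bytes, signature: str) -> bool:
    """Recompute the tag and compare in constant time before loading."""
    expected = sign_artifact(artifact, key)
    return hmac.compare_digest(expected, signature)
```

The training job would call `sign_artifact` when it publishes a checkpoint to the registry, and the inference service would call `verify_artifact` before loading it. A single changed byte in the artifact makes verification fail.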

I'd also note that the access control problem inside ML pipelines is genuinely hard. Data scientists need exploratory access during development that looks nothing like the narrow, scoped access appropriate when the same pipeline runs in production. That transition point, from experimental to operational, is where permissions tend to linger and where the least-privilege principle most often gets quietly ignored.

The AI That Acts: A Non-Human Identity and Action Control Problem

This is where the architecture gets genuinely new. Agentic AI systems (software that doesn't just respond to queries but takes actions, invokes tools, reads and writes data, and makes sequential decisions in pursuit of a goal) introduce a class of entity that Zero Trust wasn't designed to govern.

A survey of over 900 executives and technical practitioners, published earlier this year by Gravitee, found that 88% of organizations reported confirmed or suspected AI agent security incidents in the last 12 months. The identity governance picture inside those organizations is telling: only 21.9% treat agents as independent, identity-bearing entities, and 45.6% still rely on shared API keys for agent-to-agent authentication. At the same time, 82% of executives feel confident their existing policies protect them from unauthorized agent actions. That gap between executive confidence and technical reality is where the real risk lives.

The core problem is that existing Zero Trust implementations were built around human identities and relatively predictable service accounts. An AI agent is neither. It accumulates permissions at runtime. It makes decisions about which tools to invoke and what data to pass to those tools. Depending on how the agent is designed and what inputs it processes, it may be susceptible to manipulation through the content it reads, not just through its credentials. And it may operate across multiple systems in a single workflow, which means a poorly scoped agent creates a larger blast radius than a poorly scoped service account. Research published this week by Palo Alto Networks' Unit 42 on multi-agent architectures found that chaining agents together compounds the problem: each hop in a multi-agent workflow introduces additional attack surfaces that single-agent security models don't account for.

The required shift, as several speakers framed it at RSAC, is from access control to action control. Zero Trust, as most enterprises have implemented it, governs whether an entity can reach a resource. Agentic AI requires governing what that entity can do once it gets there, at the level of individual actions, not just connection establishment.

In practical terms, that means treating every agent as a distinct identity with a registered owner, scoped permissions tied to a specific task, time-bound credentials rather than standing access, and audit trails that capture what the agent actually did. The Model Context Protocol (MCP) layer, increasingly used to connect agents to enterprise tools, needs to be treated as a policy enforcement point in its own right. I went deeper on the MCP security problem in a recent post, but the architectural principle is the same: if an agent can call a tool, that call should be authenticated, authorized, and logged just like any other privileged operation.
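Those four properties (a registered owner, task-scoped permissions, time-bound credentials, and an audit trail) can be sketched as a per-action gate. The names below, including `AgentCredential`, `ActionGate`, and action strings like `crm.read`, are hypothetical and not part of MCP or any vendor's API; the sketch only shows the decision happening per action, not per connection.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass(frozen=True)
class AgentCredential:
    agent_id: str
    owner: str                       # the accountable human or team
    allowed_actions: frozenset[str]  # scoped to one task, not a broad role
    expires_at: datetime             # time-bound, no standing access


@dataclass
class ActionGate:
    audit_log: list[dict] = field(default_factory=list)

    def authorize(self, cred: AgentCredential, action: str,
                  now: Optional[datetime] = None) -> bool:
        """Evaluate every tool call individually and record the outcome."""
        now = now or datetime.now(timezone.utc)
        allowed = now < cred.expires_at and action in cred.allowed_actions
        self.audit_log.append({
            "time": now.isoformat(),
            "agent": cred.agent_id,
            "owner": cred.owner,
            "action": action,
            "allowed": allowed,
        })
        return allowed
```

An agent issued a 15-minute credential for `crm.read` can read the CRM inside that window, but a `crm.delete` call is refused, and so is any call after expiry. Denied attempts are logged alongside allowed ones, which is what makes the trail useful when reconstructing what an agent tried to do.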

The agentic surface also reintroduces the shadow IT problem in a new form. Employees are deploying agents with access to corporate systems in ways that bypass identity and access management entirely. The governance gap isn't just about the agents your organization has sanctioned. It's about agents your people are running without your knowledge.

What This Means for the Architecture

Zero Trust was conceived as a response to the failure of perimeter security. The network boundary dissolved, identities became the new perimeter, and "never trust, always verify" became the organizing principle. What AI does is expand the set of things that need to be verified, in ways that don't fit neatly into the human-centric models most Zero Trust implementations were built on.

The architectural work here isn't starting from scratch. The principles that have guided Zero Trust adoption (identity as the control plane, least privilege, continuous verification, and assume breach) are the right principles. They just need to be applied to a broader set of entities and actions than most existing implementations cover.

The AI you use requires your inspection plane to understand conversational data, not just file transfers. The AI you build requires your workload identity and supply chain controls to extend into ML pipelines and model registries. The AI that acts requires your identity governance to cover non-human entities with dynamic, task-scoped permissions, and your policy enforcement to operate at the action level, not just the access level.

None of these are complete solutions. The tooling for some of this is still being built, and the frameworks coming out of RSAC, from Microsoft, Cisco, and the Cloud Security Alliance, represent useful starting points rather than finished answers. But the direction is clear. The enterprises that treat AI as a reason to extend and mature their Zero Trust architecture, rather than as a separate security problem to be addressed with a separate set of tools, will be better positioned, because this is going to get more complex before it gets simpler.
