The Agentic AI Security Crisis Is Here, and OpenClaw Proved It.

OpenClaw became the most-starred repository in GitHub history in about 60 days. By early March, it had passed 250,000 stars, overtaking React, a project that took over a decade to reach that number. If you work in enterprise security or architecture and haven't heard of it yet, you will soon, because your employees are probably already running it.

What happened next is the clearest illustration I've seen of a problem I've been writing about: agentic AI tools are arriving in enterprise environments faster than anyone can govern them, and the security implications are no longer theoretical.

What OpenClaw Actually Does

OpenClaw is an open-source AI agent that runs locally and connects to large language models. Unlike a chatbot that answers questions, OpenClaw acts. It executes shell commands, reads and writes files, browses the web, sends emails, manages calendars, and automates workflows across Slack, WhatsApp, Telegram, and dozens of other platforms. It stays on around the clock. People were buying dedicated Mac minis just to run it 24/7. Peter Steinberger, the developer behind it, was hired by OpenAI within weeks of launch.

The security findings were staggering. A security audit identified 512 vulnerabilities across the platform, 8 of which were critical. SecurityScorecard found over 135,000 OpenClaw instances exposed to the public internet across 82 countries. One critical flaw enabled a full remote takeover via a single malicious link, with the attack completing in milliseconds.

Then there was the supply chain problem. OpenClaw's plugin marketplace, ClawHub, was found to have roughly one in five packages compromised with malicious code, including tools that installed keyloggers and credential-stealing malware while appearing completely legitimate. China restricted state agencies from running it. Meta reportedly threatened employees who installed it. Microsoft published guidance explicitly calling it "untrusted code execution with persistent credentials."

This Is the Agentic Boundary Problem in Real Time

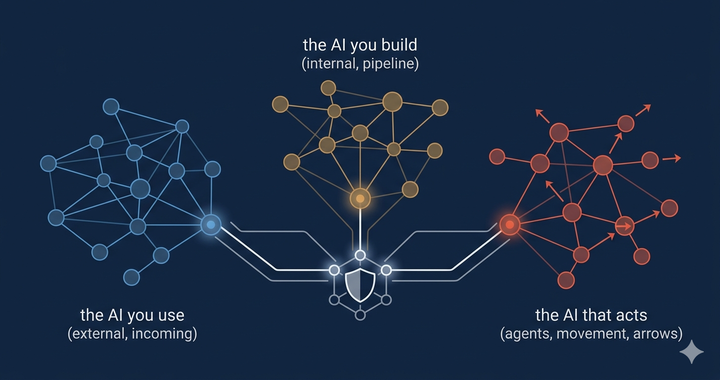

In my post on AI deployment taxonomies, I described how agentic AI constantly crosses the boundaries among Embedded, Integrated, and Private deployments, and how most enterprises have zero visibility into those crossings. OpenClaw proved that observation in the most uncomfortable way possible.

Here's how it plays out. An employee installs OpenClaw on their machine and connects it to work Slack, Google Workspace, and email. Now an autonomous AI agent has OAuth tokens granting access to corporate systems, persistent memory accumulating sensitive data across sessions, and the ability to execute commands with the user's full permissions. Traditional security tooling is largely blind to this. Endpoint security sees processes running, but can't interpret agent behavior. Network monitoring sees API calls but can't distinguish legitimate automation from a compromised agent. Identity systems see OAuth grants but don't flag AI agent connections as unusual.

Trend Micro called this "shadow AI with elevated privileges." Some security vendors estimated that roughly 22% of monitored customer environments had unauthorized OpenClaw usage by early 2026. This adoption happened in weeks. Not months. Far faster than any governance process could respond.

The gap isn't that enterprises don't understand AI risk in the abstract. It's that the tools are being deployed by individual employees at a pace that outstrips any policy. And unlike a SaaS tool that IT can discover through procurement records, an open-source agent running locally is effectively invisible until something goes wrong.

The Plumbing Problem: MCP

Understanding why OpenClaw's risk extends beyond a single tool requires an understanding of the Model Context Protocol, or MCP. It's the protocol that connects AI agents to external tools, databases, APIs, and services. Anthropic developed it, and it has rapidly become the standard connection layer for agentic AI. Amazon, Microsoft, Google, and OpenAI all support it.

The critical difference between MCP and traditional APIs is that a large language model sits in the middle as the decision maker. It decides which tools to call and what data to pass. That decision maker is probabilistic, not deterministic. It can be manipulated through prompt injection. It can be tricked into exfiltrating data through seemingly legitimate operations. And it processes its entire context as authoritative input, including any malicious instructions embedded in the data it reads.

The Coalition for Secure AI (CoSAI), which includes Google, IBM, Microsoft, and others, published an MCP security framework in January, identifying nearly 40 distinct threats across 12 categories. Real-world exploits are already surfacing: researchers found remote code execution vulnerabilities in Anthropic's own official Git MCP server, a fake npm package was caught silently copying outbound emails to an attacker, and a GitHub MCP server was hijacked to exfiltrate data from private repositories. In every case, the attack targeted the connection layer between the model and enterprise systems, not the model itself.

For architects who have been focused on securing AI models, this is a significant blind spot. The model might be perfectly aligned. But if the plumbing connecting it to your environment is compromised, that alignment doesn't help.

What This Means for Zero Trust

If you've been building a Zero Trust architecture, the agentic AI challenge maps directly onto principles you already understand. The problem is that most Zero Trust implementations were designed for human identities and traditional service accounts, not for autonomous agents that accumulate permissions, maintain persistent memory, and independently decide which tools to invoke.

Every AI agent interacting with enterprise systems needs to be treated as an identity with verifiable credentials, scoped permissions, audit trails, and lifecycle management. The Zero Trust principles apply: least privilege, continuous verification, just-in-time access, and the assumption that any agent could be compromised. The challenge is that existing IAM tools weren't built for ephemeral agents, MCP-layer authorization, or traceability across agentic workflows.

The industry is starting to respond. The CoSAI framework provides architectural guidance. SASE platforms are beginning to treat AI agent traffic as a first-class security concern. Cisco announced AI-aware SASE capabilities in February. Cato Networks launched native AI security integrated into its SASE platform this week. These are early signals that the enforcement layer is evolving, but HiddenLayer's 2026 AI Threat Landscape Report, also released this week, found that autonomous agents already account for more than one in eight reported AI breaches, and Cisco's data shows only 29% of organizations feel prepared to secure agentic deployments.

Where Architects Should Focus Right Now

I wrote in my taxonomy post that architects should map their AI deployments across the Embedded, Integrated, and Private spectrum. OpenClaw makes that advice more urgent. But it also adds a dimension: you now need to account for AI that your employees are deploying without your knowledge.

Discovery comes first. You need to know whether OpenClaw or similar agentic tools are running in your environment. That means scanning endpoints, monitoring for MCP connections and unusual API patterns, and auditing OAuth grants for AI agent connections to corporate platforms.

Then comes agent identity governance. Any AI agent that touches enterprise systems needs the same identity rigor as any other privileged entity: provisioning, scoping, monitoring, and credential retirement.

And the MCP layer requires specific attention: input validation at trust boundaries, least-privilege access to tools, human approval for sensitive operations, and full logging for audit purposes. The CoSAI white paper is a practical starting point.

OpenClaw has made one thing very clear. The gap between the speed of agentic AI deployment and enterprise security readiness is the most pressing architectural problem in the industry right now. The organizations that recognized this pattern early and started building agent-aware security architectures will navigate it. The ones waiting for perfect governance frameworks before acting may find that their employees have already made the decision for them.

Comments ()