Zero Trust AI Security: Securing the AI You Use and the AI You Build

Key Takeaways

- Zero trust AI security requires distinct approaches for AI systems that enterprises consume versus AI systems they develop internally

- Traditional perimeter-based security measures fail to protect distributed AI workloads across multi-cloud environments and third-party services

- Identity-centric controls for AI models, data pipelines, and inference endpoints are becoming as critical as user identity management

- Policy-based trust frameworks enable secure AI deployment while maintaining governance over model behavior and sensitive data access

- Enterprises integrating AI security into existing zero trust frameworks gain a competitive advantage through faster, safer AI deployment

The Convergence Crisis: When AI Meets Zero Trust

Every week, I see another enterprise struggling with the same challenge: they’ve embraced AI innovation at breakneck speed, but their security posture hasn’t kept pace. The statistics tell a stark story—73% of enterprises now use AI systems. Yet, only 24% have adequate security controls in place to protect against AI security risks, according to Wiz's 2025 AI Security Readiness report.

This gap isn’t just about delayed implementation. It represents a fundamental mismatch between how we’ve traditionally secured enterprise assets and how AI technologies actually operate. Traditional security tools built for static, perimeter-based environments cannot handle the dynamic, distributed nature of modern AI systems.

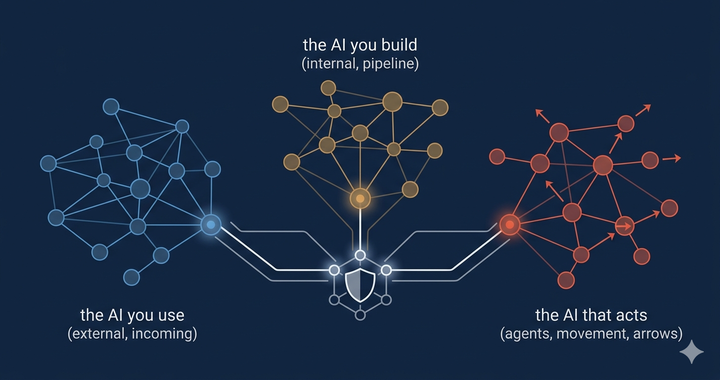

The challenge becomes even more complex when we consider that enterprises face two distinct AI security challenges: securing the AI they consume from third parties and securing the AI they build internally. Each requires different security measures, different access controls, and different approaches to continuous monitoring.

This is where zero trust architecture becomes not just helpful but essential. Zero trust’s core principle of “never trust, always verify” aligns perfectly with AI’s distributed, API-driven, and constantly evolving nature. But implementing zero trust AI security requires understanding both the unique attack surfaces that AI creates and the specific controls needed to mitigate AI security risks.

Securing AI Systems Enterprises Use: The Consumer Side

When enterprises consume AI services, whether through OpenAI’s GPT models, Amazon Bedrock, Azure AI, or countless specialized AI tools, they’re essentially extending their attack surface into third-party environments they don’t control. This creates immediate concerns about sensitive data exposure and unauthorized access.

The shadow AI problem represents one of the most pressing AI security challenges today. Employees regularly use unauthorized AI tools with corporate data, often without understanding the security implications. A recent survey found that 78% of knowledge workers admit to using AI tools at work, but only 32% of their organizations have established policies for AI applications.

Here’s how zero trust principles apply to consumed AI services:

Treat AI Services Like Any Cloud Resource — But Recognize Their Unique Blind Spots

Implement strict access controls for all AI services through your existing CASB or SASE architecture, requiring users to authenticate via your enterprise identity provider before accessing any AI tools. All AI-related traffic should still pass through monitored channels — but understand that traditional monitoring and DLP tools were never designed for conversational AI interactions.

Legacy DLP systems inspect fixed data fields, file transfers, and known content patterns. They struggle to interpret dynamic, context-driven exchanges in AI chat sessions. Sensitive data can leak subtly through prompts, follow-up questions, or model-generated summaries — often in ways that bypass keyword-based detection.
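To make the limitation concrete, here is a minimal Python sketch of keyword- and regex-based inspection. The rule names and patterns are hypothetical stand-ins for a legacy DLP policy, not any vendor's rule set. The same sensitive fact is caught when pasted verbatim and missed entirely once it is rephrased conversationally.

```python
import re

# Hypothetical pattern-based rules, similar in spirit to legacy DLP inspection.
LEGACY_RULES = {
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "confidential_marker": re.compile(r"\bconfidential\b", re.IGNORECASE),
}

def legacy_dlp_hits(text: str) -> list[str]:
    """Return the names of every rule whose pattern matches the text."""
    return [name for name, pattern in LEGACY_RULES.items() if pattern.search(text)]

# A direct paste containing the literal patterns is caught...
direct = "CONFIDENTIAL: customer SSN is 123-45-6789"
# ...but the same facts, rephrased in a chat, match no rule at all.
rephrased = ("Summarize this for me: the customer's social is one two three, "
             "four five, six seven eight nine, and please keep it between us.")

print(legacy_dlp_hits(direct))     # ['us_ssn', 'confidential_marker']
print(legacy_dlp_hits(rephrased))  # []
```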

This is where emerging AI-native security platforms come in. Solutions like Aim Security, Wiz, and similar technologies provide semantic understanding of AI interactions. They analyze conversations, intent, and data lineage rather than relying on static rules. These platforms can detect when confidential business data, source code, or regulated information is being shared with external models, even when it’s rephrased or embedded in natural language.

In short, enterprises should still apply Zero Trust network principles — authentication, least privilege, and traffic inspection — but complement them with AI-aware visibility and policy enforcement. Without this capability, organizations risk believing their traditional DLP or CASB stack provides coverage when, in reality, the most sensitive exposures occur within AI conversations that legacy tools can’t see.

Network Segmentation for AI Traffic — and the Limits of Traditional Isolation

Create dedicated network segments for AI service communication, just as you would for any high-value cloud workload. Segmentation still matters — it limits lateral movement and gives security teams control points for inspection. However, traditional segmentation by itself can’t fully protect AI interactions.

AI systems don’t exchange simple data packets or database queries; they exchange context-rich conversations. Sensitive details can move through model prompts, multi-turn chats, or API payloads that look benign to legacy firewalls and gateways. Even tightly segmented networks can’t detect when an employee is pasting confidential code snippets, customer records, or regulated text into an external AI interface.

Modern AI-aware segmentation must combine identity, context, and content awareness.

Platforms such as Aim Security, Wiz, and other AI-native security tools extend visibility into these flows by classifying data semantically, recognizing intent and meaning rather than just file type or header. They integrate with CASB and SASE layers to dynamically inspect AI traffic, correlating conversations with user identity, model endpoints, and risk scores.

The goal is to evolve from simple network isolation to policy-driven micro-segmentation that continuously evaluates who is communicating with which model, what data is being exchanged, and why. That’s how Zero Trust principles translate effectively into the AI era — through intelligent segmentation that understands language, not just packets.
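A minimal sketch of such a policy decision follows, assuming a hypothetical per-model policy table and a `data_class` value already produced by a content classifier (the semantic classification itself is the hard part and is not shown). It evaluates who is asking, which model they are reaching, how sensitive the data is, and for what purpose, then returns allow, coach, or block.

```python
from dataclasses import dataclass
from enum import IntEnum

class Sensitivity(IntEnum):
    # Ordered so that a higher value means more sensitive data.
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    REGULATED = 3

@dataclass(frozen=True)
class AIRequest:
    user_groups: frozenset[str]   # groups asserted by the enterprise identity provider
    model_endpoint: str           # which model the user is communicating with
    data_class: Sensitivity       # assumed output of an upstream semantic classifier
    purpose: str                  # declared or inferred business purpose

# Hypothetical policy: who may use each model, the most sensitive data it may
# receive, and the purposes it is approved for.
MODEL_POLICY = {
    "internal-llm": {"groups": {"engineering", "finance"},
                     "max_data": Sensitivity.CONFIDENTIAL,
                     "purposes": {"code-review", "reporting"}},
    "public-chatbot": {"groups": {"all-staff"},
                       "max_data": Sensitivity.PUBLIC,
                       "purposes": {"drafting"}},
}

def decide(req: AIRequest) -> str:
    """Return 'allow', 'coach', or 'block' for a single AI interaction."""
    policy = MODEL_POLICY.get(req.model_endpoint)
    if policy is None:
        return "block"                  # unknown model: deny by default
    if not (req.user_groups & policy["groups"]):
        return "block"                  # identity not entitled to this model
    if req.data_class > policy["max_data"]:
        return "block"                  # data too sensitive for this model
    if req.purpose not in policy["purposes"]:
        return "coach"                  # permitted, but warn the user
    return "allow"

request = AIRequest(frozenset({"engineering"}), "internal-llm",
                    Sensitivity.REGULATED, "code-review")
print(decide(request))  # block: regulated data exceeds the model's ceiling
```

Unknown models and unentitled identities are denied outright, mirroring "never trust, always verify", while a purpose mismatch only coaches the user so legitimate work is not silently blocked.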

Continuous Monitoring and Logging

Log every AI service interaction, including prompts, responses, and administrative actions. This creates an audit trail, which is essential for compliance, and helps identify potential data leaks or policy violations. Your SIEM should correlate AI service logs with other security events to detect coordinated attacks.
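One way to make these logs useful to a SIEM is to emit each interaction as a structured JSON event. The sketch below is illustrative only: the field names are hypothetical and should be mapped to whatever schema your SIEM expects. It keeps the prompt and response as recommended above and adds a SHA-256 digest of the prompt so identical prompts can be correlated across events.

```python
import hashlib
import json
from datetime import datetime, timezone

def ai_interaction_event(user: str, service: str, action: str,
                         prompt: str, response: str) -> str:
    """Build one JSON log line describing an AI interaction (hypothetical schema)."""
    event = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "event_type": "ai.interaction",
        "user": user,                 # identity from the enterprise IdP
        "service": service,           # AI service or model endpoint
        "action": action,             # e.g. "prompt" or "admin.config_change"
        "prompt": prompt,
        # Where retention rules forbid raw text, the digest alone still
        # lets analysts correlate repeated prompts across events.
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        "response": response,
        "response_chars": len(response),
    }
    return json.dumps(event, sort_keys=True)

print(ai_interaction_event("alice", "chat.example.com", "prompt", "hi", "hello"))
```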

Securing AI Systems Enterprises Build: The Developer Side

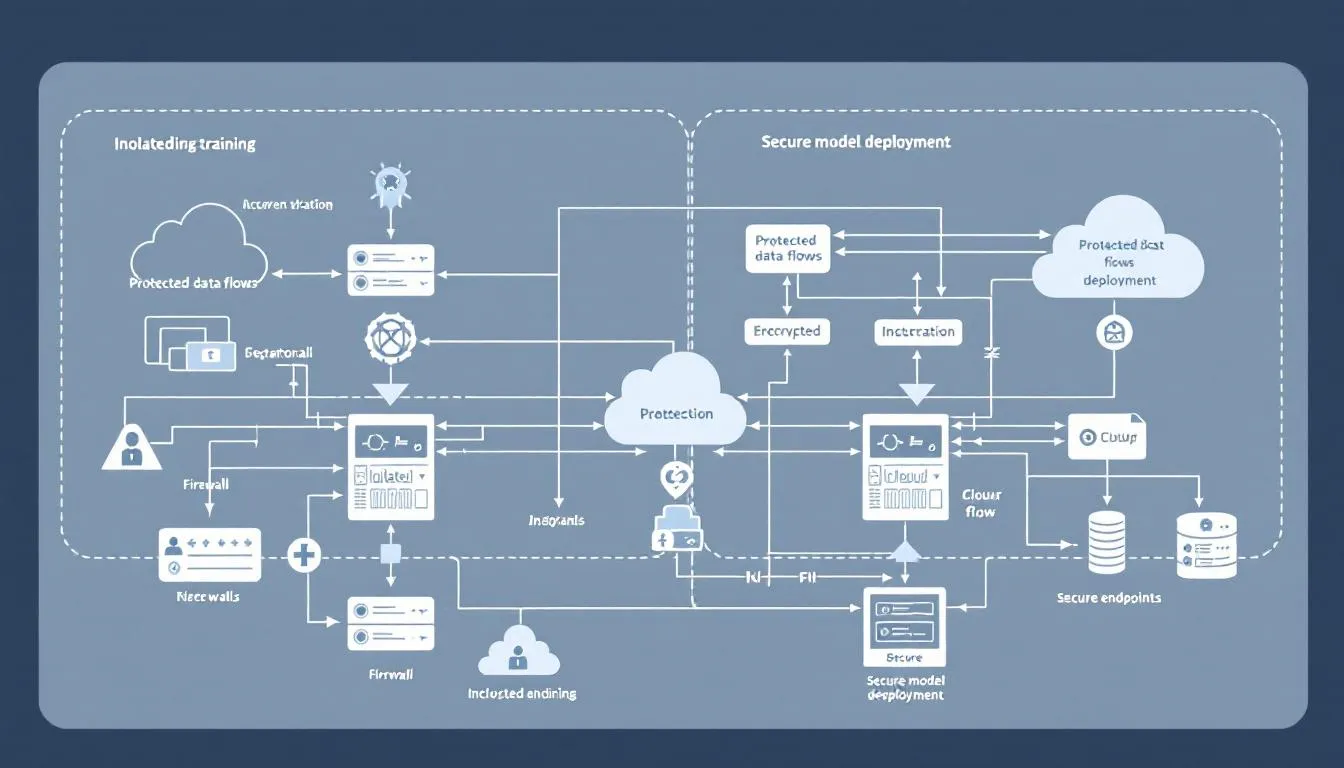

Building custom AI systems introduces an entirely different set of security challenges. From protecting training data to securing model deployment pipelines, enterprises must secure the entire AI lifecycle against sophisticated threats like data poisoning and model theft.

Securing the AI Development Lifecycle

The journey from raw data to a deployed AI model creates multiple opportunities for security breaches. Each stage requires specific security controls:

Data Ingestion and Preparation: Implement validation pipelines that verify the integrity and source of training data. Use cryptographic hashing to detect unauthorized modifications and maintain strict access controls over data repositories.
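A minimal sketch of the hashing step, using only Python's standard library: record a SHA-256 manifest of a training-data directory at ingestion time, then re-check it before training to list any file that was added, removed, or modified. The directory layout is an assumption, and the manifest itself should be stored where the data pipeline cannot overwrite it.

```python
import hashlib
from pathlib import Path

def sha256_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large datasets need not fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()

def build_manifest(data_dir: Path) -> dict[str, str]:
    """Map each file's path (relative to data_dir) to its SHA-256 digest."""
    return {str(p.relative_to(data_dir)): sha256_file(p)
            for p in sorted(data_dir.rglob("*")) if p.is_file()}

def verify_manifest(data_dir: Path, manifest: dict[str, str]) -> list[str]:
    """Return files added, removed, or modified since the manifest was built."""
    current = build_manifest(data_dir)
    changed = {name for name in manifest.keys() | current.keys()
               if manifest.get(name) != current.get(name)}
    return sorted(changed)
```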

Model Training and Development: Isolate training environments using micro-segmentation principles. Only authorized data scientists and ML engineers should access training infrastructure, and all model development should occur in monitored, controlled environments.

Model Deployment and Serving: Treat AI models as critical assets, with the same protections as sensitive applications. Implement container security for AI workloads, secure API endpoints for model inference, and maintain version control with cryptographic signatures.

Model Versioning and Provenance Tracking

Every AI model should have a lineage record: what data was used for training, who performed the training, when it occurred, and what testing was completed. This provenance information becomes a security control, helping detect compromised models and enabling rapid rollback when issues arise.
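A lineage record can be as simple as an immutable data structure. The sketch below uses hypothetical field names and a hypothetical set of required test suites. The `parent_version` link is what enables rapid rollback: it lets you walk back from a suspect version to the nearest ancestor that passed every required test.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Tuple

REQUIRED_TESTS = frozenset({"accuracy", "bias", "security"})  # hypothetical release gate

@dataclass(frozen=True)
class ModelLineage:
    model_name: str
    version: str
    training_data_sha256: str             # digest of the training-data manifest
    trained_by: str                       # human or service identity that ran training
    trained_at: str                       # ISO 8601 timestamp, UTC
    tests_passed: Tuple[str, ...] = ()    # evaluation suites completed
    parent_version: Optional[str] = None  # version this one replaced

def is_release_ready(rec: ModelLineage) -> bool:
    return REQUIRED_TESTS.issubset(rec.tests_passed)

def rollback_target(registry: Dict[str, ModelLineage], bad_version: str) -> Optional[str]:
    """Walk parent links from a suspect version to the nearest fully tested ancestor."""
    current = registry[bad_version].parent_version
    while current is not None:
        rec = registry[current]
        if is_release_ready(rec):
            return current
        current = rec.parent_version
    return None
```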

Protecting Against Data Poisoning

Data poisoning attacks aim to corrupt an AI model's behavior by injecting malicious examples into its training dataset. Implement automated detection for unusual data access patterns, validate data sources before ingestion, and maintain baseline behavioral profiles for your AI models to detect drift that might indicate compromise.
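As one simple, illustrative check (an assumption, not a complete defense), a pipeline can compare the label distribution of each incoming training batch against a trusted baseline using total variation distance, and quarantine batches that shift too far. This catches crude label flipping that changes class balance; targeted poisoning that preserves the overall distribution needs other controls. The 0.1 threshold is arbitrary and would need tuning.

```python
from collections import Counter

def label_distribution(labels):
    """Fraction of examples carrying each label."""
    counts = Counter(labels)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {label: n / total for label, n in counts.items()}

def total_variation(p, q):
    """Total variation distance: half the L1 distance between two distributions."""
    return 0.5 * sum(abs(p.get(k, 0.0) - q.get(k, 0.0)) for k in p.keys() | q.keys())

def should_quarantine(baseline_labels, batch_labels, threshold=0.1):
    """Flag a batch whose label mix drifts too far from the trusted baseline."""
    distance = total_variation(label_distribution(baseline_labels),
                               label_distribution(batch_labels))
    return distance > threshold
```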

Zero Trust Principles Applied to AI Architecture

Zero trust AI security extends traditional zero trust principles to encompass the unique characteristics of AI systems. This means applying “never trust, always verify” not just to users and devices, but to AI models, datasets, and autonomous agents.

Least Privilege for AI Workloads

Every AI system, training pipeline, and model inference endpoint should operate with the minimum permissions necessary. A model serving predictions should not have access to training data repositories. Development environments should not connect to production data sources. This principle prevents lateral movement if attackers compromise any single component.
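In code, least privilege reduces to a deny-by-default lookup. The workload names, resources, and grants below are hypothetical; in a real environment they would live in your cloud IAM or policy engine rather than in application code.

```python
# Hypothetical grants: each workload lists exactly the (resource, action)
# pairs it needs. Anything not listed is denied.
GRANTS: dict[str, set[tuple[str, str]]] = {
    "svc-inference-prod": {("model-registry/prod", "read"),
                           ("feature-store/prod", "read")},
    "svc-training": {("datasets/curated", "read"),
                     ("model-registry/staging", "write")},
    "svc-dev-notebooks": {("datasets/synthetic", "read")},
}

def is_allowed(workload: str, resource: str, action: str) -> bool:
    """Deny by default: allow only an explicitly granted (resource, action)."""
    return (resource, action) in GRANTS.get(workload, set())

# The serving workload cannot reach training data, and dev cannot reach prod.
print(is_allowed("svc-inference-prod", "datasets/curated", "read"))   # False
print(is_allowed("svc-dev-notebooks", "feature-store/prod", "read"))  # False
```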

Assume Breach for AI Systems

Design your AI security architecture assuming attackers will compromise at least one component of your AI ecosystem. This means:

- Isolating AI workloads from other enterprise systems

- Implementing robust protection for sensitive data even within AI environments

- Planning incident response procedures specific to AI security incidents

- Conducting regular security audits focused on AI-specific vulnerabilities

Continuous Verification of Model Behavior

Traditional applications behave predictably, but AI models can drift over time or be subtly compromised through adversarial inputs. Implement continuous monitoring to establish baseline behavioral patterns for your AI models and alert on deviations that might indicate compromise or manipulation.
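One common way to quantify drift in a model's outputs is the Population Stability Index (PSI), which compares the binned distribution of a recent window against a baseline. The sketch below bins hypothetical confidence scores in [0, 1]. A frequently cited rule of thumb treats PSI above roughly 0.25 as a significant shift, but any alert threshold should be calibrated for your own models.

```python
import math

EDGES = [0.0, 0.25, 0.5, 0.75, 1.0]  # hypothetical bins for confidence scores

def bin_proportions(values, edges=EDGES):
    """Share of values per bin [edges[i], edges[i+1]); the last bin includes the top edge.

    Values outside [edges[0], edges[-1]] are ignored.
    """
    counts = [0] * (len(edges) - 1)
    for v in values:
        for i in range(len(edges) - 1):
            last_bin = i == len(edges) - 2
            if edges[i] <= v < edges[i + 1] or (last_bin and v == edges[-1]):
                counts[i] += 1
                break
    n = sum(counts) or 1
    return [c / n for c in counts]

def psi(expected, actual, eps=1e-6):
    """Population Stability Index between two lists of bin proportions."""
    total = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)   # floor empty bins to avoid log(0)
        total += (a - e) * math.log(a / e)
    return total

def drifted(baseline_scores, recent_scores, threshold=0.25):
    """Alert when the recent score distribution has shifted past the threshold."""
    return psi(bin_proportions(baseline_scores), bin_proportions(recent_scores)) > threshold
```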

Micro-segmentation for AI Infrastructure

Break your AI infrastructure into small, isolated segments. Training environments, development systems, testing platforms, and production inference endpoints should each operate in separate network segments with carefully controlled communication paths.
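The controlled communication paths can be expressed as an explicit allowlist of flows between segments, with everything else denied. The segment names and ports below are hypothetical. In practice the policy would be enforced by firewalls, security groups, or a service mesh, and a check like `audit_flows` would run against observed flow logs.

```python
# Hypothetical allowlist of (source segment, destination segment, port),
# read as "source initiates a connection to destination".
ALLOWED_FLOWS = {
    ("dev", "training", 443),        # submit jobs to the training orchestrator
    ("training", "registry", 443),   # publish candidate models
    ("testing", "registry", 443),    # pull candidates for evaluation
    ("inference", "registry", 443),  # pull approved models for serving
}

def flow_permitted(src: str, dst: str, port: int) -> bool:
    """Default deny: a flow is allowed only if it is explicitly listed."""
    return (src, dst, port) in ALLOWED_FLOWS

def audit_flows(observed):
    """Return observed (src, dst, port) flows that violate the segmentation policy."""
    return [flow for flow in observed if not flow_permitted(*flow)]

print(audit_flows([("training", "registry", 443),
                   ("inference", "training", 22)]))  # [('inference', 'training', 22)]
```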

Identity for AI: Beyond Human Users

Traditional identity management focuses on human users and devices, but AI environments introduce new entities that need identity: AI models, datasets, automated agents, and service-to-service communications within AI pipelines.

Establishing Identity for AI Models

Every AI model should have a cryptographically verifiable identity that includes its training provenance, version information, and authorized use cases. This enables policy enforcement based on model characteristics—for example, models trained on customer data might be restricted from certain deployment environments.

Service-to-Service Authentication in AI Pipelines

MLOps workflows involve complex interactions between data processing services, training orchestrators, model registries, and inference endpoints. Each service should authenticate using certificates or tokens with limited scope and lifespan, preventing unauthorized access even if credentials are compromised.
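To illustrate limited scope and lifespan, here is a simplified, standard-library-only token scheme: an HMAC-signed set of claims naming the calling service, its scopes, and an expiry. It is a teaching sketch with a hypothetical shared key and hypothetical scope names, not a replacement for standard mechanisms such as mutual TLS certificates or OAuth 2.0 tokens issued by your identity provider.

```python
import base64
import hashlib
import hmac
import json
import time

# Hypothetical shared key; in practice it would come from a secrets manager.
SECRET = b"example-only-signing-key"

def _b64(data: bytes) -> str:
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")

def _unb64(text: str) -> bytes:
    return base64.urlsafe_b64decode(text + "=" * (-len(text) % 4))

def _sign(body: str) -> str:
    return _b64(hmac.new(SECRET, body.encode("utf-8"), hashlib.sha256).digest())

def issue_token(service, scopes, ttl_s=300, now=None):
    """Issue a token naming the caller, its scopes, and an expiry ttl_s seconds out."""
    now = time.time() if now is None else now
    claims = {"sub": service, "scope": sorted(scopes), "exp": int(now) + ttl_s}
    body = _b64(json.dumps(claims, sort_keys=True).encode("utf-8"))
    return f"{body}.{_sign(body)}"

def verify_token(token, required_scope, now=None):
    """Return the caller's name if the token is authentic, unexpired, and in scope; else None."""
    now = time.time() if now is None else now
    body, _, sig = token.partition(".")
    if not hmac.compare_digest(sig.encode("utf-8"), _sign(body).encode("utf-8")):
        return None                      # tampered, truncated, or signed with another key
    claims = json.loads(_unb64(body))
    if now >= claims["exp"] or required_scope not in claims["scope"]:
        return None                      # expired or outside the granted scope
    return claims["sub"]
```

Because the expiry is short and the scope is narrow, a leaked token is useful to an attacker only briefly and only for the one operation it names.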

Policy-Based Trust for AI Agents

As AI agents become more autonomous, they need their own trust policies. An AI agent handling customer service queries should have different access rights than one analyzing internal financial data. These policies should be dynamic, adjusting based on context, risk assessment, and current threat levels.
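A minimal sketch of a dynamic agent policy, assuming hypothetical agent names and tools, a 1-to-3 enterprise threat level, and a 0-to-1 session risk score from some upstream risk engine. Each tool is tagged with the highest threat level at which it stays available, so an agent's capabilities shrink automatically as risk rises.

```python
# Hypothetical entitlements: tool -> highest effective threat level at which
# the tool is still available to that agent.
AGENT_TOOLS = {
    "support-agent": {"search_kb": 3, "read_order_status": 2, "issue_refund": 1},
    "finance-agent": {"read_ledger": 2, "export_report": 1},
}

def allowed_tools(agent: str, threat_level: int, session_risk: float) -> set:
    """Shrink an agent's tool set as enterprise threat level or session risk rises.

    threat_level: 1 (normal) to 3 (elevated); session_risk: 0.0 to 1.0.
    A high-risk session raises the effective level by one.
    """
    effective = threat_level + (1 if session_risk >= 0.7 else 0)
    return {tool for tool, max_level in AGENT_TOOLS.get(agent, {}).items()
            if effective <= max_level}

print(sorted(allowed_tools("support-agent", 2, 0.9)))  # ['search_kb']
```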

Certificate Management for AI Assets

Implement certificate-based authentication for AI models and services. Models should be cryptographically signed during training and validation, with signatures verified before deployment. This prevents deployment of tampered or unauthorized models.
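The sign-then-verify gate can be sketched with Python's standard library. To stay self-contained, this sketch uses an HMAC over the artifact's SHA-256 digest as a stand-in for a real digital signature. Production systems would normally use asymmetric signatures (for example, X.509 code-signing certificates from your PKI, or Sigstore) so deployment systems hold only a public verification key. The key and the revocation set are hypothetical.

```python
import hashlib
import hmac

# Hypothetical key held only by the training/validation pipeline.
SIGNING_KEY = b"example-only-model-signing-key"
# Signatures of models later found to be compromised (a minimal revocation list).
REVOKED_SIGNATURES = set()

def sign_model(artifact: bytes) -> str:
    """Return an HMAC-SHA256 tag over the artifact's SHA-256 digest."""
    digest = hashlib.sha256(artifact).digest()
    return hmac.new(SIGNING_KEY, digest, hashlib.sha256).hexdigest()

def verify_before_deploy(artifact: bytes, signature: str) -> bool:
    """Deployment gate: reject revoked signatures and any artifact that fails verification."""
    if signature in REVOKED_SIGNATURES:
        return False
    return hmac.compare_digest(sign_model(artifact).encode("utf-8"),
                               signature.encode("utf-8"))
```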

Practical Implementation Framework

Implementing zero trust AI security requires a phased approach that builds on existing security capabilities while addressing AI-specific risks.

Phase 1: Discovery and Inventory

Begin by cataloging all AI assets in your environment:

- Third-party AI services and API integrations

- Internal AI models and applications

- Data sources used for AI training and inference

- Users and services with AI system access

- Shadow AI usage across the organization

Create a comprehensive inventory that includes risk assessments for each AI asset. High-risk items—those that process customer data or support critical business functions—should receive priority attention.
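A toy prioritization over such an inventory might look like the following. The asset fields and additive weights are hypothetical, chosen only to reflect the criteria above (customer data, business criticality, and whether the tool is sanctioned); a real program would apply its own risk methodology.

```python
from dataclasses import dataclass

@dataclass
class AIAsset:
    name: str
    kind: str                      # e.g. "third-party service", "internal model"
    processes_customer_data: bool
    business_critical: bool
    sanctioned: bool               # False for discovered shadow AI

def risk_score(asset: AIAsset) -> int:
    """Toy additive score; the weights are illustrative, not a standard."""
    return (3 * int(asset.processes_customer_data)
            + 2 * int(asset.business_critical)
            + 2 * int(not asset.sanctioned))

def prioritize(assets):
    """Highest-risk assets first; ties broken by name for stable output."""
    return sorted(assets, key=lambda a: (-risk_score(a), a.name))
```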

Phase 2: Policy Development

Develop AI-specific policies that extend your existing zero trust framework:

- Acceptable use policies for AI tools and services

- Data classification schemes that account for AI processing

- Access control policies for AI development and deployment

- Incident response procedures for AI security threats

Engage stakeholders across security, legal, compliance, and business units to ensure policies address both security risks and business requirements.

Phase 3: Technical Controls Deployment

Implement the technical security controls identified in your policy framework:

- Deploy access controls for AI services and development environments

- Implement monitoring and logging for AI-related activities

- Establish secure AI development pipelines with appropriate gates

- Configure data protection controls for AI workloads

Focus on integrating these controls with existing security tools rather than deploying standalone solutions.

Phase 4: Continuous Monitoring and Adaptation

Establish ongoing processes for monitoring AI security posture and adapting to new threats:

- Regular security audits focused on AI systems

- Threat intelligence gathering for AI-specific attack vectors

- Policy updates based on new AI technologies and use cases

- Performance monitoring to ensure security controls don’t impede AI innovation

Integrating AI Security into Existing Zero Trust Frameworks

Most enterprises already have Zero Trust initiatives in place. The next step is to extend identity, inspection, and policy from users and devices to the full spectrum of AI activity — models, prompts, agents, and retrieval pipelines. The goal isn’t to build a parallel security stack but to make existing Zero Trust frameworks AI-aware so they can govern conversations, intent, and context, not just files or URLs.

Extending SASE Architectures

SASE remains the enforcement plane for identity, inspection, and egress control. What’s changed is what needs to be inspected.

Modern SASE deployments should:

- Discover and govern AI usage, not just entire applications.

- Identify generative AI services and embedded copilots across SaaS platforms.

- Apply granular policies to prompts and responses based on user identity, data sensitivity, and business context.

- Use LLM-aware data classification to detect sensitive content even when it’s paraphrased or embedded in natural language.

Traditional data-inspection rules only see structured fields; AI security requires conversation- and intent-level visibility.

AI-Aware CASB Policies

Legacy CASB policies were built for file uploads and keyword matches. AI usage demands semantic governance that understands the flow of context, not just content.

Enterprises should:

- Inspect and log AI prompts and responses to maintain audit trails and data lineage.

- Detect “shadow AI” tools and classify them by risk level, applying allow/coach/block policies.

- Integrate AI-native detection platforms that understand when regulated or confidential data appears in conversational flows.
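Discovery and allow/coach/block enforcement can be sketched together: scan proxy log lines for known AI domains, count usage, and attach the action for each domain's risk tier. The log format (`user domain`), the domains under the reserved `.example` TLD, and the risk tiers are all hypothetical; real catalogs and log formats come from your CASB or SASE platform.

```python
from collections import Counter

# Hypothetical catalog of AI domains and their risk tiers.
AI_DOMAIN_RISK = {
    "chat.sanctioned-ai.example": "sanctioned",
    "notes-ai.example": "medium",
    "free-pdf-ai.example": "high",
}
ACTION_BY_TIER = {"sanctioned": "allow", "medium": "coach", "high": "block"}

def shadow_ai_report(proxy_log_lines):
    """Map each catalogued AI domain seen in the logs to (request count, policy action)."""
    hits = Counter()
    for line in proxy_log_lines:
        parts = line.split()
        if len(parts) >= 2 and parts[1] in AI_DOMAIN_RISK:
            hits[parts[1]] += 1
    return {domain: (count, ACTION_BY_TIER[AI_DOMAIN_RISK[domain]])
            for domain, count in hits.items()}
```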

The result is a CASB layer that governs not only SaaS access but also how sensitive data interacts with AI systems.

Identity Provider Integration

Identity must now cover more than human users. Extend your existing identity provider to assign verifiable identities to AI components — including models, datasets, pipelines, and agents.

Best practices include:

- Implementing service-to-service authentication using scoped certificates or tokens.

- Tracking model identity, version, provenance, and approved use cases.

- Enforcing authorization policies based on the role and trust level of each model or agent.

This ensures every entity participating in an AI workflow — human or machine — can be authenticated, verified, and governed.

SIEM/SOAR Integration

Treat AI telemetry as a first-class signal within your SOC.

Integrate AI activity into your existing SIEM and SOAR tools by:

- Forwarding logs from model endpoints, prompt/response gateways, and policy enforcement events.

- Correlating AI behavior with user sessions and other security signals.

- Automating containment actions such as isolating compromised models, revoking tokens, or rolling back model versions.
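Automated containment is often expressed as a mapping from alert type to an ordered list of response steps. The alert types and step names below are hypothetical labels; in a real SOAR platform each step would invoke a vendor-specific integration.

```python
# Hypothetical mapping from AI alert types to ordered containment steps.
PLAYBOOKS = {
    "model_drift_or_poisoning": ["quarantine_model", "rollback_model_version"],
    "credential_compromise": ["revoke_tokens", "notify_owner"],
    "prompt_data_leak": ["block_session", "notify_owner"],
}

def plan_response(alert: dict) -> list:
    """Return ordered containment steps; unknown alert types go to a human analyst."""
    steps = list(PLAYBOOKS.get(alert.get("type"), ["escalate_to_analyst"]))
    # High-severity alerts also page an analyst, without duplicating the step.
    if alert.get("severity") == "high" and "escalate_to_analyst" not in steps:
        steps.append("escalate_to_analyst")
    return steps
```

Copying the playbook list before appending keeps the shared `PLAYBOOKS` table unchanged between alerts.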

AI systems evolve rapidly; your monitoring must keep pace to maintain situational awareness and response readiness.

Add AI Posture and Risk Management

Finally, extend your Zero Trust posture assessments to include AI assets.

AI posture management tools should:

- Inventory all models, datasets, and connectors across environments.

- Assess their data sensitivity, compliance posture, and deployment context.

- Continuously evaluate risk and apply policy-driven guardrails to enforce acceptable use.

This creates a unified control plane where AI workloads are monitored, measured, and managed with the same rigor as any other critical enterprise system.

The Future of Zero Trust AI Security

Zero Trust and AI are converging faster than most organizations realize. What began as an exercise in network hardening has evolved into a strategy for governing everything with agency — humans, machines, and now, intelligent systems that reason and act autonomously.

The next phase of Zero Trust isn’t about more segmentation or stricter access control. It’s about dynamic trust for dynamic intelligence — architectures capable of continuously verifying not only who and what is acting, but why and under what policy those actions occur.

Emerging Standards and Frameworks

Regulatory momentum is accelerating. Frameworks such as the NIST AI Risk Management Framework and ISO/IEC 42001 for AI management systems are rapidly becoming benchmarks for responsible deployment. These standards complement Zero Trust by introducing structured ways to evaluate AI-specific risks, such as bias, drift, misuse, and model integrity, as part of a continuous assurance loop.

Over the next few years, enterprises will begin blending these frameworks directly into their Zero Trust policies, ensuring that AI systems meet the same verification, documentation, and accountability requirements as any other critical workload.

AI Identity and Provenance

The most transformative development in Zero Trust AI will be identity for models and agents.

As enterprises deploy AI internally, each model and agent will need a unique, verifiable identity linked to its provenance, training data, and approved use cases.

This enables policy enforcement not just by user or network context, but by model trust level — ensuring that only validated models can access sensitive data, invoke specific APIs, or make high-impact decisions.

Over time, this will evolve into a model trust registry, where organizations maintain digital certificates for AI systems, ensuring traceability and revocation capabilities similar to those of modern PKI for users and devices.

Autonomous Security Responses

Security operations are shifting toward autonomous containment.

AI-driven SOC platforms are beginning to detect, isolate, and remediate threats in real time — including those originating from compromised models or malicious prompt activity.

Zero Trust architectures will increasingly include self-healing mechanisms that can:

- Quarantine a model showing signs of drift or data poisoning.

- Automatically revoke access tokens for affected pipelines.

- Restore known-good versions of compromised AI assets.

These closed-loop responses can shrink dwell time from hours to seconds, in line with the Zero Trust principles of continuous verification and least privilege.

Converging AI Posture and Cyber Posture

Over the next three years, AI security posture will no longer be a standalone category.

Expect AI posture management and cyber posture management to converge into unified control planes, with identity, data, and model integrity sharing a single trust graph.

Organizations will assess and enforce risk across people, workloads, and AI components in a single, continuous system of record—a single “trust fabric” for everything intelligent or connected.

Industry Consolidation and Platform Integration

The explosion of specialized AI security startups will eventually give way to platform consolidation. Established cybersecurity providers are already embedding AI posture, model governance, and prompt inspection into their Zero Trust suites.

For enterprises, this means the future of AI security won’t live in a new tool; it will be natively woven into existing Zero Trust platforms, just as SASE converged networking and security into a single cloud-delivered service.

From Control to Confidence

Ultimately, the future of Zero Trust AI Security is about confidence — knowing that innovation can move at full speed without outrunning safety.

As enterprises transition from experimentation to full-scale AI adoption, success will depend on security architectures that are not just resilient, but adaptive — continuously verifying, learning, and refining in parallel with the AI systems they protect.

The trends shaping Zero Trust AI security aren’t theoretical — they’re already reshaping how enterprises design, deploy, and defend their AI ecosystems. The challenge now is operationalizing these principles. Building a modern Zero Trust foundation for AI doesn’t require starting over; it requires extending what you already have — identity, network, and data controls — to include the AI models and pipelines driving your business.

In the next section, we’ll move from strategy to execution with a phased action plan that any organization can adapt. Whether you’re just discovering shadow AI use across your workforce or already running internal model deployments, these steps will help you strengthen governance, enforce visibility, and build measurable trust into every stage of your AI adoption journey.

Your Zero Trust AI Security Action Plan

Building Zero Trust for AI isn’t a one-time project — it’s a continuous evolution of identity, visibility, and verification across the entire AI lifecycle. The objective is to make every model, dataset, and interaction discoverable and governable, no matter where they operate.

Establish Complete Visibility

Start by making AI activity visible.

- Identify every AI system, API, and service in use — whether officially approved or shadow IT.

- Map where data moves between people, applications, and models.

- Include embedded AI features inside existing SaaS platforms, not just standalone tools.

Visibility is the foundation of Zero Trust for AI. You can’t secure what you can’t see.

Define Policy and Purpose

Clarify how AI is allowed to operate inside your enterprise.

- Create acceptable-use and data-handling policies specific to AI.

- Define which data classifications are permitted for model training, inference, or prompt interactions.

- Document approved providers and use cases to guide adoption instead of blocking it.

Effective policy doesn’t restrict innovation — it channels it safely.

Extend Controls You Already Have

Leverage existing Zero Trust investments instead of building new silos.

- Integrate AI awareness into your SASE and CASB platforms to monitor and enforce access controls.

- Extend identity providers to cover models, agents, and pipelines, assigning verifiable credentials to every non-human entity.

- Feed AI telemetry into SIEM/SOAR tools to correlate behavior, detect drift, and trigger automated responses.

Zero Trust works when the same verification logic applies across users, workloads, and now, AI systems.

Build Secure Development and Deployment Pipelines

Treat every model like production software.

- Protect the integrity of training data with validation, hashing, and access controls.

- Sign model artifacts and enforce provenance checks before deployment.

- Segment environments so training, testing, and inference remain isolated.

These controls turn MLOps into secure MLOps, ensuring models can be trusted before and after deployment.

Govern, Monitor, and Adapt

Zero Trust is continuous.

- Implement AI posture management to maintain a live inventory of models, data sources, and connectors.

- Continuously evaluate risk based on usage, data sensitivity, and business impact.

- Automate response workflows for model compromise, policy violations, or data leakage.

- Measure effectiveness through security KPIs such as model coverage, identity compliance, and incident reduction.

Governance transforms AI security from reactive defense into proactive assurance.

Strengthen Culture and Capability

Technology alone doesn’t sustain Zero Trust.

- Train teams on AI-specific threats — from prompt injection to model inversion.

- Establish cross-functional governance that includes security, data science, compliance, and legal.

- Promote a mindset of secure innovation where every AI initiative starts with security built in.

Summary

The future of secure AI isn’t about adding new tools — it’s about expanding trust to new entities.

When enterprises extend Zero Trust to include the AI they use and the AI they build, they enable responsible innovation at scale.

Visibility reveals risk.

Policy defines boundaries.

Identity enforces control.

Governance builds confidence.

That’s the path to operating AI safely, sustainably, and with trust at its core.

The AI revolution isn’t slowing down, but that doesn’t mean you have to sacrifice security for innovation. By extending zero trust principles to cover both the AI you consume and the AI you build, you can enable secure AI adoption that drives business value while protecting against emerging cyber threats.

Start with what you have. Build incrementally. Measure continuously. The future belongs to organizations that can innovate with AI while maintaining robust protection for their most sensitive data and critical systems.

Frequently Asked Questions

How does Zero Trust AI security differ from traditional application security?

Traditional application security protects static, predictable systems such as web apps, APIs, and endpoints.

AI systems behave differently. Their behavior can shift as models are retrained, fine-tuned, or given new context, which means their attack surface changes continuously.

Zero Trust AI security extends traditional controls by adding model integrity verification, training data protection, and behavioral monitoring. Instead of securing only code and infrastructure, it secures the decision-making process itself — ensuring every AI action can be verified, traced, and governed.

What specific tools or technologies are needed to implement Zero Trust for AI?

Most enterprises don’t need a separate toolset; they need to extend existing Zero Trust controls to cover AI.

Start with your identity provider, SASE, and CASB platforms, ensuring they can authenticate and monitor AI services and embedded copilots.

Then layer AI-native capabilities — such as prompt and response inspection, model provenance tracking, and AI posture management — to provide the visibility traditional tools can’t.

The priority isn’t new technology; it’s integrated governance across your existing stack.

How should organizations approach AI security in multi-cloud or hybrid environments?

Consistency is key.

Apply the same authentication, data-classification, and inspection policies across all environments — whether workloads run in AWS, Azure, or on-premises.

Centralize identity and policy enforcement so every model or service request passes through a unified trust fabric.

Use orchestration tools or cloud-native policy engines to keep configurations aligned, and extend your Zero Trust telemetry to capture AI activity wherever it occurs.

What are the primary compliance considerations for Zero Trust AI security?

AI introduces new obligations around data sovereignty, algorithmic accountability, transparency, and privacy.

Zero Trust supports compliance by enforcing who and what can access sensitive data, recording model behavior, and maintaining audit trails.

Align your AI policies with emerging standards like NIST AI RMF and ISO/IEC 42001, and map them to familiar regulatory requirements (GDPR, CCPA, HIPAA).

The goal is demonstrable control — being able to show regulators and stakeholders exactly how your AI operates and the safeguards in place.

How can organizations measure the effectiveness of their Zero Trust AI security program?

Measurement must blend technical performance with business impact.

Track metrics such as:

- Percentage of AI assets with verified identity and access policies

- Reduction in shadow AI usage

- Mean time to detect and contain AI-related incidents

- Policy compliance rates for model deployment and data access
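Several of these metrics are simple to compute once the underlying data exists. The sketch below assumes hypothetical record shapes: asset dictionaries with `verified_identity` and `access_policy` flags, and incident dictionaries with epoch-second timestamps for when each incident started, was detected, and was contained.

```python
from statistics import mean

def identity_coverage(assets) -> float:
    """Percentage of AI assets with both a verified identity and an access policy."""
    if not assets:
        return 0.0
    covered = sum(1 for a in assets if a["verified_identity"] and a["access_policy"])
    return 100.0 * covered / len(assets)

def percent_reduction(before: int, after: int) -> float:
    """Percentage drop in a count, e.g. shadow AI sessions per month."""
    return 100.0 * (before - after) / before if before else 0.0

def mean_minutes(incidents, start_key: str, end_key: str) -> float:
    """Mean elapsed minutes between two timestamps (epoch seconds) across incidents."""
    return mean((i[end_key] - i[start_key]) / 60 for i in incidents)

incidents = [{"started": 0, "detected": 600, "contained": 1800},
             {"started": 0, "detected": 1200, "contained": 3000}]
print(mean_minutes(incidents, "started", "detected"))    # 15.0 (mean time to detect)
print(mean_minutes(incidents, "detected", "contained"))  # 25.0 (mean time to contain)
```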

Complement these with strategic indicators: faster AI deployment cycles without increased risk, and higher confidence from compliance and leadership teams.

Effectiveness is not only about reducing incidents — it’s about enabling secure, scalable AI innovation.

Where should enterprises start?

Begin where visibility is lowest.

Map your AI exposure, discover hidden tools, and connect those findings to your existing Zero Trust architecture.

From there, define policy, extend controls, and integrate AI-specific governance.

You don’t have to secure AI in one leap — you have to make sure every new step in adoption is trustworthy.

Closing Thoughts

AI is no longer an experiment living at the edge of the enterprise — it’s woven into everyday workflows, decisions, and products. The question isn’t whether organizations will adopt AI, but whether they can do so responsibly, transparently, and securely.

Zero Trust gives us the framework for that future.

It provides the discipline to verify every interaction, govern every model, and secure every data flow — without slowing innovation.

The next wave of security leadership won’t come from those who say “no” to AI, but from those who build trust into it from the start.

In this new era, success belongs to organizations that treat AI as part of the trust fabric — not an exception to it.

About the Author

Philip Walley is a cybersecurity strategist and product marketing leader specializing in Zero Trust architecture, Secure Access Service Edge (SASE), and AI security.

As Senior Product Marketing Manager at Cato Networks and founder of PhilipWalley.com, he helps enterprises bridge the gap between innovation and assurance by applying Zero Trust principles to emerging technologies like generative AI, machine learning, and autonomous systems.

Philip writes about the intersection of security, trust, and technology adoption — translating complex frameworks into actionable strategies for CISOs, architects, and business leaders who want to innovate without compromise.

Connect with him on LinkedIn or follow his insights at PhilipWalley.com for the latest perspectives on AI, security, and the evolution of digital trust.
