Key Takeaways

- The zero trust security model provides essential protection against unique AI threats, including adversarial attacks, data poisoning, and model theft that traditional security approaches cannot adequately address.

- AI architectures with multiple models require granular access controls, continuous monitoring, and microsegmentation to secure data flows between components like training pipelines, inference engines, and model repositories.

- Organizations implementing zero trust for AI systems report an 83% reduction in security incidents while enabling secure deployment of AI agents and Large Language Models (LLMs) in enterprise environments.

- Modern AI security frameworks must evolve beyond traditional perimeter-based approaches to handle autonomous AI agents operating at machine speed across cloud, edge, and IoT infrastructure.

- Successful zero trust AI implementation requires coordinated efforts between security teams, AI developers, and business stakeholders to balance innovation with robust threat protection.

The rapid adoption of artificial intelligence across enterprise environments has fundamentally transformed how organizations approach cybersecurity. As AI continues to revolutionize business operations, the attack surface has expanded dramatically, creating unique security challenges that traditional perimeter-based defenses cannot adequately address. With 94% of organizations experiencing identity-related breaches and AI systems processing vast amounts of sensitive data, the need for comprehensive security frameworks has never been more critical.

Zero trust security represents a fundamental shift from legacy security models, operating on the principle that no entity (whether user, device, or AI system) should receive implicit trust regardless of its network location. This approach proves particularly vital for AI implementations, where autonomous AI agents and sophisticated AI models require continuous verification and strict access controls to prevent data leaks, model theft, and adversarial attacks.

Modern enterprises implementing AI-powered solutions must navigate an increasingly complex threat landscape where malicious actors leverage AI to enhance traditional attack vectors while simultaneously targeting AI systems themselves. The convergence of zero trust principles with AI security creates a robust framework capable of protecting sensitive information, customer data, and proprietary AI models while enabling innovation and operational efficiency.

Understanding AI Security Challenges in Modern Architecture

The integration of AI systems into organizational infrastructures introduces a range of sophisticated security threats that extend far beyond traditional cybersecurity concerns. These emerging threats require specialized understanding and targeted mitigation strategies that conventional security approaches cannot provide.

Adversarial attacks represent one of the most insidious AI threats, where malicious actors manipulate input data to cause AI systems to produce unauthorized outputs without triggering traditional monitoring tools. These attacks exploit the mathematical foundations of machine learning models, injecting carefully crafted inputs that appear benign to human observers but cause AI models to misclassify data or make incorrect decisions. Unlike conventional cyberattacks that target system vulnerabilities, adversarial attacks exploit the inherent characteristics of artificial intelligence itself.

Data poisoning vulnerabilities emerge during the training phase of AI models, where attackers inject corrupted or malicious training data to compromise model reliability and decision-making accuracy. This attack vector proves particularly dangerous because the effects may not manifest until the compromised AI system reaches production environments, potentially affecting thousands of decisions before detection. The distributed nature of modern training data pipelines creates multiple injection points where malicious actors can introduce poisoned datasets.

Model theft and inversion attacks target the intellectual property embedded within proprietary AI models, enabling unauthorized parties to extract sensitive algorithms or reconstruct training datasets. These attacks can occur through API interactions, where attackers submit carefully crafted queries to reverse-engineer model parameters, or through direct access to model files in compromised systems. The theft of AI models represents not only a loss of competitive advantage but also potential exposure of sensitive information used in model training.

AI-powered cyber threats leverage machine learning to automate and enhance traditional attack vectors, creating more sophisticated phishing campaigns, social engineering attacks, and penetration testing tools. These AI threats can adapt in real-time to defensive measures, making them particularly challenging for security teams to counter. The automation capabilities of artificial intelligence enable attackers to scale their operations dramatically while reducing the human resources required for successful breaches.

The black-box problem in AI decision-making creates transparency challenges for security teams attempting to detect anomalous behavior or investigate security incidents. Many AI models, particularly deep learning systems, operate as opaque decision-making entities, with their reasoning processes hidden from human observers. This opacity complicates incident response efforts and makes it difficult to determine whether unusual AI system behavior indicates a security compromise or represents normal operational variation.

Organizations deploying AI systems must also contend with the expanding attack surface created by distributed AI architectures. Modern AI implementations often span multiple cloud providers, edge computing resources, and on-premises infrastructure, creating numerous potential entry points for attackers. Each component in the AI system architecture—from data ingestion pipelines to model serving endpoints—represents a possible vulnerability that requires careful security consideration.

Zero Trust Fundamentals for AI Systems

The application of zero-trust principles to AI systems requires a fundamental rethinking of how organizations approach security for AI implementations. Unlike traditional security models that establish trusted network perimeters, a zero-trust architecture serves as a comprehensive security framework that assumes no implicit trust—threats exist both inside and outside organizational boundaries, requiring continuous verification for every access request and interaction.

The core principle of “never trust, always verify” becomes particularly critical when applied to AI system components that operate autonomously and make decisions without direct human oversight. Every AI agent, model inference request, and data transaction must undergo explicit authentication and authorization, regardless of its origin or previous trust status. This approach recognizes that AI systems can be compromised through subtle manipulations that may not trigger traditional security alerts.

Continuous authentication and authorization for AI access represents a departure from static permission models toward dynamic, risk-based access control systems. AI systems must continuously verify their identity and authorization throughout their operational lifecycle, not just during initial deployment. This ongoing verification process considers factors such as current threat levels, recent system behavior, and the sensitivity of requested resources when making access decisions. A robust zero-trust architecture is essential here, as it ensures trust is continuously verified through layered security measures, access controls, and ongoing monitoring.
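As a rough illustration of a risk-based access decision, the sketch below scores each request from the three factors mentioned above (threat level, recent behavior, resource sensitivity) and re-evaluates them on every call. The `AccessRequest` type, the weights, and the thresholds are hypothetical values chosen for the example, not recommendations.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessRequest:
    """Hypothetical per-request signals, each normalized to the range 0.0-1.0."""
    identity_verified: bool      # result of a fresh authentication check
    threat_level: float          # 0.0 = calm, 1.0 = active incident
    behavior_anomaly: float      # 0.0 = normal, 1.0 = highly unusual
    resource_sensitivity: float  # 0.0 = public, 1.0 = restricted


def risk_score(req: AccessRequest) -> float:
    """Weighted sum of the risk signals (illustrative weights that sum to 1)."""
    return (0.3 * req.threat_level
            + 0.4 * req.behavior_anomaly
            + 0.3 * req.resource_sensitivity)


def decide(req: AccessRequest,
           deny_above: float = 0.7,
           step_up_above: float = 0.4) -> str:
    """Return 'allow', 'step_up' (re-authenticate), or 'deny' for one request."""
    if not req.identity_verified:
        return "deny"  # never trust an unverified caller
    score = risk_score(req)
    if score > deny_above:
        return "deny"
    if score > step_up_above:
        return "step_up"
    return "allow"
```

Because `decide` takes no stored session state, the same agent can be allowed on one request and challenged on the next if its signals change.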

Comprehensive visibility and monitoring of AI workflows enable security teams to detect suspicious activities and unauthorized access attempts in real-time. This visibility extends beyond traditional network monitoring to include specific metrics such as model prediction confidence levels, input data quality scores, and behavioral deviation indicators. Security practitioners must understand the standard behavior patterns of AI systems to identify anomalies that may indicate security incidents.
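One simple way to turn a metric such as prediction confidence into a behavioral deviation indicator is a z-score against a recent baseline. The sketch below assumes a hypothetical `is_anomalous` helper and a threshold of three standard deviations; production systems would likely use more robust statistics.

```python
from statistics import mean, stdev
from typing import Sequence


def is_anomalous(baseline: Sequence[float],
                 observed: float,
                 threshold: float = 3.0) -> bool:
    """Flag `observed` if it lies more than `threshold` standard deviations
    from the mean of `baseline` (for example, recent confidence scores)."""
    if len(baseline) < 2:
        raise ValueError("baseline needs at least two samples")
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # A perfectly flat baseline: any different value is a deviation.
        return observed != mu
    return abs(observed - mu) / sigma > threshold
```

For instance, with a baseline of confidence scores clustered around 0.91, a sudden reading of 0.50 would be flagged while 0.92 would not.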

Least privilege access controls limit AI system permissions to only what is necessary for specific tasks and roles, reducing the potential impact of compromised AI agents or models. This principle requires careful analysis of AI system requirements to determine the minimal necessary permissions while maintaining operational functionality. Implementing least privilege for AI systems often involves dynamically adjusting permissions based on current tasks and risk assessments.
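A minimal sketch of task-scoped, default-deny permissions is shown below. The task names and permission strings are invented for illustration; the point is only that an agent's allowed actions are derived from its current task rather than from a broad standing role.

```python
# Hypothetical mapping from task to the minimal permissions it needs.
TASK_PERMISSIONS = {
    "summarize_tickets": frozenset({"tickets:read"}),
    "retrain_model": frozenset({"training_data:read", "model_registry:write"}),
}


def is_permitted(task: str, permission: str) -> bool:
    """Default deny: unknown tasks and unlisted permissions are refused."""
    return permission in TASK_PERMISSIONS.get(task, frozenset())
```

An agent working on `summarize_tickets` can read tickets but cannot write to the model registry, even if the same agent is allowed to do so while running `retrain_model`.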

Dynamic policy enforcement adapts security measures based on real-time risk assessments and behavioral analytics, enabling zero-trust architectures to respond automatically to changing threat conditions. A well-defined zero-trust policy is required to guide access controls, authentication protocols, and security procedures, ensuring comprehensive protection for AI systems. These policies must account for the unique characteristics of AI systems, including their autonomous operation, high-speed decision-making, and complex interdependencies with other system components.

The zero trust model recognizes that many organizations initially apply zero trust principles to human users before extending them to AI systems and other non-person entities. However, the autonomous nature of artificial intelligence creates scenarios in which traditional identity frameworks must adapt to address new security considerations specific to machine-to-machine interactions and autonomous decision-making.

AI Architecture Components and Security Considerations

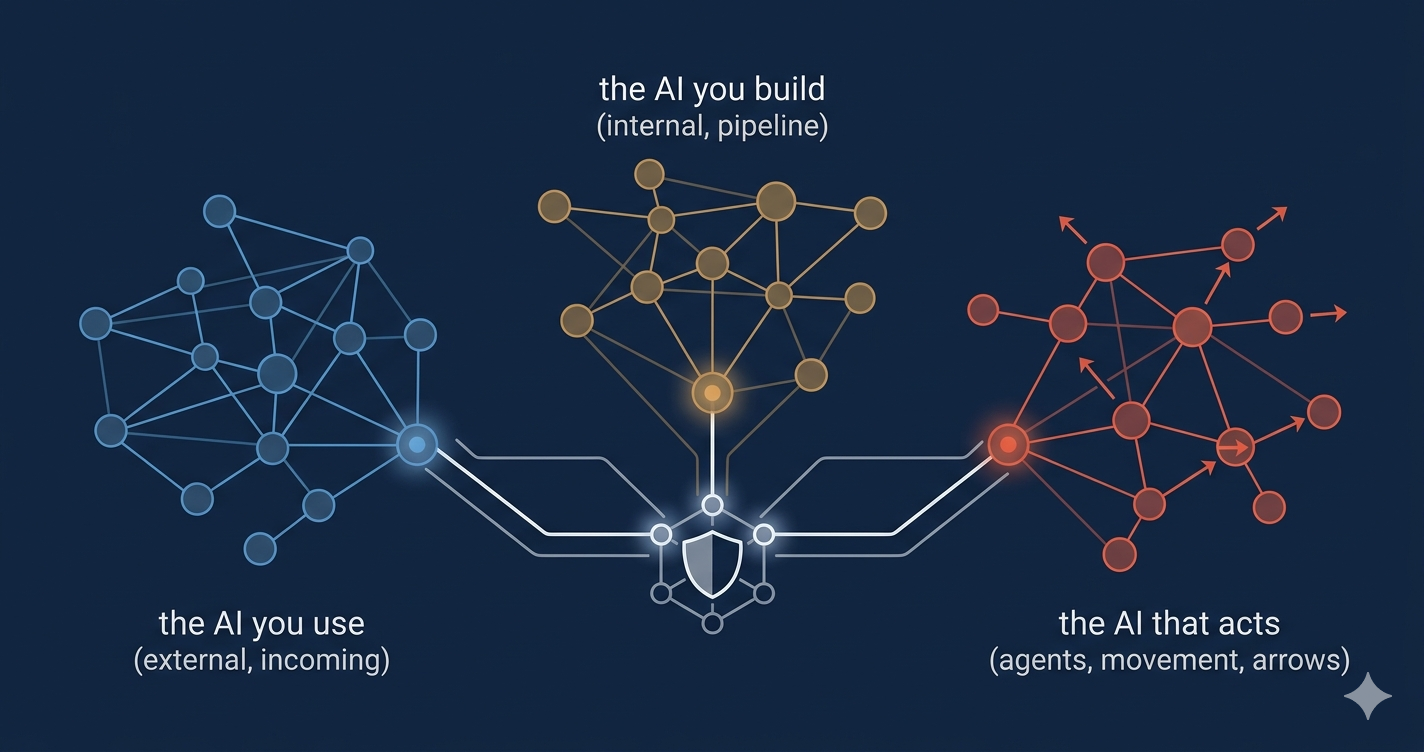

Modern AI architectures comprise multiple interconnected components, each presenting unique security challenges that require specialized protection strategies within a zero-trust framework. Understanding these components and their security implications enables organizations to implement comprehensive protection measures that address the full spectrum of AI-related risks.

Data ingestion layers serve as the entry point for information flowing into AI systems and require robust security measures to prevent contaminated input from reaching training or inference systems. These layers must implement encrypted pipelines that protect data in transit while providing validation mechanisms to verify data integrity and authenticity. Input validation becomes particularly critical for AI systems, as malicious data injection can compromise model performance or enable adversarial attacks that may not be detected until significant damage occurs.
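One way to verify integrity and authenticity at the ingestion boundary is to have trusted producers sign each record and have the pipeline reject anything whose signature does not match. The sketch below uses HMAC-SHA256 from the Python standard library; the function names and the shared-key arrangement are assumptions made for the example.

```python
import hashlib
import hmac
import json


def sign_record(record: dict, key: bytes) -> str:
    """Return a hex HMAC-SHA256 over a canonical JSON encoding of the record."""
    payload = json.dumps(record, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def verify_record(record: dict, signature: str, key: bytes) -> bool:
    """Accept the record only if its signature matches (constant-time compare)."""
    expected = sign_record(record, key)
    return hmac.compare_digest(expected, signature)
```

Sorting keys before signing keeps the signature stable when producers serialize fields in a different order. A record altered after signing fails verification and can be quarantined before it reaches training or inference.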

Model training environments demand isolated compute resources with comprehensive audit trails for all training activities to protect against data poisoning and unauthorized model modifications. These environments process vast amounts of sensitive data and proprietary algorithms, making them high-value targets for malicious actors seeking to steal intellectual property or compromise model integrity. Secure model versioning systems enable organizations to track changes to AI models over time, facilitating rollback capabilities when security incidents occur.
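An audit trail is more useful when tampering with it is detectable. The sketch below shows one common approach, a hash-chained append-only log, where each entry commits to the previous one. The `AuditLog` class and event fields are hypothetical, and a real deployment would also persist entries to protected storage.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


class AuditLog:
    """In-memory, hash-chained log of training events (illustrative only)."""

    def __init__(self) -> None:
        self._entries: list = []

    @staticmethod
    def _digest(prev_hash: str, event: dict) -> str:
        body = json.dumps(event, sort_keys=True)
        return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()

    def append(self, event: dict) -> str:
        """Record an event and return the hash that chains it to its predecessor."""
        prev_hash = self._entries[-1]["hash"] if self._entries else GENESIS
        stored = json.loads(json.dumps(event))  # detach from the caller's dict
        entry_hash = self._digest(prev_hash, stored)
        self._entries.append({"event": stored, "prev": prev_hash, "hash": entry_hash})
        return entry_hash

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev_hash = GENESIS
        for entry in self._entries:
            if entry["prev"] != prev_hash:
                return False
            if self._digest(prev_hash, entry["event"]) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True
```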

Inference engines require real-time security inspection capabilities that operate without compromising performance requirements for production AI applications. Effective security solutions for inference engines must pair real-time enforcement with deep inspection that can handle the scale and complexity of AI agents and their communications. These components serve live traffic and must maintain low latency while implementing security controls such as input validation, output filtering, and behavioral monitoring. The challenge lies in implementing security measures that scale with production workloads while maintaining the responsiveness that users expect from AI-powered applications.

Model repositories require comprehensive access controls, encryption at rest, and integrity verification to protect intellectual property and prevent unauthorized access to models. These repositories store the core assets of AI implementations—the trained models that represent significant organizational investment in data collection, algorithm development, and computational resources. Version control systems for model repositories must track not only model changes but also associated metadata, training data provenance, and security audit information.
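Integrity verification for a stored model can be as simple as comparing the artifact's SHA-256 digest with the digest recorded at publication time. The sketch below assumes the expected digest comes from trusted repository metadata; the function names are illustrative.

```python
import hashlib
import hmac
from pathlib import Path


def file_sha256(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large model artifacts need not fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_model_artifact(path: Path, expected_digest: str) -> bool:
    """Return True only if the artifact matches the recorded hex digest."""
    return hmac.compare_digest(file_sha256(path), expected_digest.lower())
```

A serving process could call `verify_model_artifact` before loading weights and refuse to start if the check fails.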

API gateways and integration points require robust authentication, rate limiting, and input validation to secure external connections while enabling necessary integration with other organizational systems. These components are often the most exposed elements of AI architectures, as they provide programmatic access to AI capabilities across internal and external systems. Proper API security includes not only authentication and authorization but also comprehensive logging and monitoring of API usage patterns to detect potential abuse or compromise.
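Rate limiting at a gateway is often implemented with a token bucket, which allows short bursts while capping the sustained request rate (and, as a side effect, slows query-heavy model extraction attempts). The sketch below is a single-process illustration with an injectable clock; the class name and parameters are assumptions, and it is not thread-safe as written.

```python
import time
from typing import Callable


class TokenBucket:
    """Allow up to `capacity` burst requests, refilled at `refill_per_second`."""

    def __init__(self, capacity: int, refill_per_second: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self._clock = clock
        self._tokens = float(capacity)  # start with a full bucket
        self._last = clock()

    def allow(self) -> bool:
        """Consume one token if available; otherwise reject the request."""
        now = self._clock()
        elapsed = now - self._last
        self._last = now
        # Add tokens earned since the last call, never exceeding capacity.
        self._tokens = min(float(self.capacity),
                           self._tokens + elapsed * self.refill_per_second)
        if self._tokens >= 1.0:
            self._tokens -= 1.0
            return True
        return False
```

In practice a gateway would keep one bucket per API key or agent identity, so one noisy client cannot exhaust the budget of another.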

The interconnected nature of these components creates complex data flows that must be secured end-to-end while maintaining the performance characteristics required for effective AI operations. Each component interaction represents a potential security boundary that requires careful consideration of trust relationships, data sensitivity, and access control requirements.

Implementing Zero Trust in Multi-Model AI Environments

Implementing zero trust in multi-model environments requires carefully orchestrating security policies across different AI components while maintaining the flexibility needed for rapid AI development and deployment. Organizations must balance security requirements with operational efficiency to avoid creating barriers that impede legitimate AI innovation and development activities. Adopting zero-trust policies is crucial for reducing security incidents and enhancing operational efficiency in multi-model AI environments.

Protecting AI Agents and Agentic Networks

The emergence of autonomous AI agents operating within organizational environments introduces novel security challenges that extend beyond traditional zero-trust considerations. These AI agents possess varying levels of autonomy and decision-making authority, requiring specialized security frameworks that can adapt to the unique characteristics of autonomous artificial intelligence systems.

Dynamic onboarding processes for AI agents must assign appropriate privileges and roles while maintaining human oversight and approval workflows throughout the agent lifecycle. The end user plays a dual role in these security workflows: they can both onboard agents and act as their manager, overseeing their activities and privileges. Unlike traditional users who undergo a one-time onboarding process, AI agents may require frequent role adjustments as operational requirements change and trust levels evolve. These processes must account for the autonomous nature of AI agents while ensuring that privilege assignment remains aligned with organizational security policies and risk tolerance.

Agent-specific authentication and authorization mechanisms go beyond inherited user privileges to account for autonomous operation capabilities and the unique identity characteristics of artificial intelligence entities. AI agents operating on behalf of human users must maintain clear boundaries between inherited permissions and agent-specific capabilities to prevent privilege escalation and unauthorized access. These mechanisms must support both human-delegated authority and autonomous agent capabilities while maintaining comprehensive audit trails for all agent actions.

Software-controlled tagging and labeling systems enable scalable macro- and micro-segmentation policies by automating the categorization of AI agents based on their roles, capabilities, and trust levels. These systems must adapt to the dynamic nature of agent networks, where new agents can be rapidly deployed and existing agents can change roles or capabilities in response to operational requirements. Automated tagging reduces the administrative burden of managing large numbers of AI agents while ensuring that security policies remain properly applied.
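To make tag-driven segmentation concrete, the sketch below models agents as sets of tags and allows communication only when some source tag and destination tag pair appears in an allow-list. The tag vocabulary (`role:...`, `zone:...`) and the policy entries are invented for the example.

```python
from dataclasses import dataclass
from typing import FrozenSet, Set, Tuple


@dataclass(frozen=True)
class Agent:
    name: str
    tags: FrozenSet[str]


# Hypothetical allow-list of (source_tag, destination_tag) pairs.
# Anything not listed is denied by default.
ALLOWED_FLOWS: Set[Tuple[str, str]] = {
    ("role:support", "zone:ticketing"),
    ("role:finance", "zone:ledger"),
}


def may_communicate(source: Agent, destination: Agent) -> bool:
    """Permit the flow only if some tag pair is explicitly allowed."""
    return any((s, d) in ALLOWED_FLOWS
               for s in source.tags
               for d in destination.tags)
```

Because policy is written against tags rather than individual agents, a newly deployed agent inherits the right segmentation rules as soon as it is labeled.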

Semantic inspection capabilities using lightweight AI models analyze agent communications and detect malicious or anomalous behavior in real time without significantly impacting network performance. These inspection systems must understand the context and intent of agent communications to distinguish between legitimate autonomous behavior and potential security threats. The challenge lies in implementing inspection capabilities that can operate at machine speed while providing meaningful security insights for human security teams.

Dynamic role adjustment and revocation systems can expand, restrict, or revoke agent privileges based on behavioral analysis and security events, enabling rapid responses to potential compromises or policy violations. These systems must balance the operational needs of autonomous AI agents with security requirements, providing mechanisms for automated responses and human override. Role adjustment systems must consider the potential impact of privilege changes on ongoing AI operations and provide appropriate notification and escalation procedures.
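The sketch below illustrates one possible adjustment rule: automation may only restrict or revoke privileges based on an anomaly score, while any expansion or restoration requires an explicit human override. The privilege levels, score thresholds, and that division of authority are assumptions made for the example.

```python
from enum import IntEnum
from typing import Optional


class Privilege(IntEnum):
    REVOKED = 0
    RESTRICTED = 1
    STANDARD = 2
    ELEVATED = 3


def adjust_privilege(current: Privilege,
                     anomaly_score: float,
                     human_override: Optional[Privilege] = None) -> Privilege:
    """Return the agent's next privilege level.

    A human override always wins. Otherwise a high anomaly score revokes
    access, a moderate score steps the agent down one level (never below
    RESTRICTED), and a low score leaves the level unchanged.
    """
    if human_override is not None:
        return human_override
    if current is Privilege.REVOKED:
        return Privilege.REVOKED  # automation never restores access
    if anomaly_score >= 0.9:
        return Privilege.REVOKED
    if anomaly_score >= 0.6:
        return Privilege(max(current - 1, Privilege.RESTRICTED))
    return current
```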

Protecting AI agents requires understanding their operational patterns, communication requirements, and potential security implications. Organizations must develop specialized policies and procedures for managing AI agents while ensuring that autonomous capabilities do not create unacceptable security risks or bypass established security controls.

Best Practices for AI Security Zero Trust Implementation

Successful implementation of zero-trust security for AI systems requires adherence to established best practices that address the unique challenges of AI security while maintaining operational effectiveness. These practices provide actionable guidance for organizations seeking to balance AI innovation with comprehensive security protection.

Multi-factor authentication (MFA) requirements for all human users accessing AI systems provide essential protection against credential compromise and unauthorized access. These requirements must extend beyond simple password protection to include biometric verification, hardware tokens, or other strong authentication factors that significantly increase the difficulty of unauthorized access. For automated processes and system-to-system interactions, certificate-based authentication provides equivalent security through cryptographic validation of system identity.

End-to-end encryption for all AI data flows, including training data, model parameters, and inference results, protects sensitive information in transit and at rest throughout the AI system lifecycle. Encryption strategies for AI systems must account for the high-volume data processing requirements while maintaining performance characteristics necessary for operational effectiveness. Key management systems for AI environments must support automated key rotation and distribution while providing comprehensive audit trails for cryptographic operations.

Regular security assessments and penetration testing explicitly designed for AI systems identify vulnerabilities unique to machine learning environments that traditional security testing approaches may miss. These assessments must include evaluations of adversarial attack resistance, data poisoning vulnerabilities, and model extraction risks specific to artificial intelligence implementations. Security testing for AI systems requires specialized expertise in both cybersecurity and machine learning to identify and remediate AI-specific vulnerabilities effectively.

Incident response procedures tailored for AI security events provide structured approaches for addressing model compromise, detecting data poisoning, and mitigating adversarial attacks. These procedures must account for the unique characteristics of AI security incidents, including the potential for subtle manipulation that may not be immediately apparent and the complex interdependencies between AI system components. Response procedures must include provisions for model isolation, training data verification, and systematic assessment of AI system integrity following security incidents.

Security awareness training for AI development teams covers threat vectors specific to machine learning and responsible AI deployment practices that minimize security risks. This training must address both technical security considerations and the ethical implications of AI system compromise, helping development teams understand their role in maintaining comprehensive security postures. Security awareness programs should also emphasize the importance of protecting prompts and data in AI tools and companions, such as Microsoft Copilots, by applying Zero Trust principles to mitigate risks associated with AI interactions. Training programs must be regularly updated to address emerging threats and evolving best practices in AI security.

Implementing these best practices requires coordination among security teams, AI development personnel, and organizational leadership to ensure that security measures support rather than impede legitimate AI innovation and deployment. Organizations must establish clear policies and procedures that guide AI security decision-making while maintaining flexibility to adapt to evolving AI technologies and threat landscapes.

Real-World Applications and Case Studies

The practical implementation of zero-trust security for AI systems delivers significant benefits across diverse industry sectors, with organizations reporting substantial improvements in security posture while maintaining operational efficiency and innovation. These real-world applications provide valuable insights into successful zero-trust AI implementations. Many enterprises rely on Microsoft Entra ID and Microsoft 365 as foundational components of their Zero Trust security strategies, highlighting the widespread adoption of these solutions in modern cybersecurity frameworks.

Financial institutions implementing zero trust to protect customer data in AI-powered fraud detection systems demonstrate how comprehensive security frameworks can enhance both security and operational effectiveness. These organizations typically process vast amounts of sensitive financial information using AI models that must operate in real time to identify fraudulent transactions. Zero trust implementations enable granular access controls that protect customer data while maintaining the low-latency performance required for effective fraud detection. Regulatory compliance requirements in financial services drive the need for sophisticated audit trails and monitoring capabilities that provide comprehensive visibility into AI system operations.

Healthcare organizations securing AI diagnostic tools with granular access controls illustrate the critical importance of protecting patient information while enabling medical AI innovations. These implementations must comply with stringent healthcare privacy regulations while providing medical professionals with timely access to AI-powered diagnostic insights. Zero-trust architectures allow healthcare organizations to protect proprietary AI models and sensitive patient data while supporting collaborative medical research and treatment planning.

Technology companies implementing zero trust for Large Language Model deployments, including enterprise AI assistants and automated customer service systems, showcase the scalability of zero trust approaches for high-volume AI applications. These deployments must handle millions of user interactions while protecting sensitive information and preventing unauthorized access to proprietary AI models. Zero-trust implementations enable these organizations to provide AI-powered services to end users while maintaining strict access controls and comprehensive monitoring of AI system behaviors.

Manufacturing enterprises that protect proprietary AI models for predictive maintenance and quality control demonstrate how zero trust enables secure data sharing with partners while preserving competitive advantage. These organizations typically deploy AI systems across complex supply chains involving multiple external systems and partner organizations. Zero-trust architectures enable secure integration with external systems while maintaining control over sensitive manufacturing data and proprietary AI algorithms.

Government agencies securing AI systems for national security applications implement enhanced monitoring and strict access controls for classified data processing, showcasing the most stringent security requirements for AI implementations. These deployments must meet the highest security standards while enabling AI capabilities that support critical government functions. Zero-trust implementations provide the comprehensive security controls needed to protect classified information while enabling AI innovation in government applications.

Across these diverse applications, organizations consistently report significant improvements in security posture, with many achieving the 83% reduction in security incidents cited in industry studies. These improvements result from comprehensive visibility, continuous verification, and dynamic access controls that characterize practical zero-trust implementations for AI systems.

Future of AI Security and Zero Trust Evolution

The convergence of artificial intelligence and zero-trust security continues to evolve rapidly, with emerging technologies and changing threat landscapes driving innovation in security architectures and implementation approaches. Understanding these future directions enables organizations to make informed decisions about long-term AI security strategies.

AI-powered threat detection systems integrated directly into zero-trust architectures promise faster identification and response to sophisticated attacks by leveraging machine learning to analyze security events and identify patterns indicative of emerging threats. These systems can process vast amounts of security telemetry in real-time, identifying subtle attack indicators that might escape traditional rule-based detection systems. The integration of artificial intelligence into security monitoring creates feedback loops that make security systems more effective over time through continuous learning from attack patterns and defensive responses.

Automated policy adjustment capabilities that leverage machine learning to optimize security controls in response to evolving threat landscapes and system behaviors represent the next evolution in adaptive security frameworks. These capabilities enable zero-trust architectures to respond automatically to changing risk conditions, adjusting access controls and security policies without manual intervention. The challenge lies in ensuring that automated adjustments remain aligned with organizational risk tolerance and operational requirements while providing appropriate oversight and control mechanisms.

Enhanced transparency and explainability tools address AI threats related to the black-box problem while maintaining strong security postures by providing security teams with insights into AI decision-making processes. These tools enable a better understanding of why AI systems make particular decisions, facilitating more effective incident investigation and security policy development. Explainable AI for security applications must balance transparency with the protection of sensitive security algorithms and techniques.

Expanded zero trust frameworks for Internet of Things (IoT) and edge AI deployments address scenarios where traditional perimeter security proves ineffective due to distributed architectures and resource constraints. These frameworks must accommodate the unique characteristics of edge computing environments, including limited computational resources, intermittent connectivity, and diverse device capabilities. Zero trust implementations for edge AI must provide security controls that scale from powerful edge servers to resource-constrained IoT devices.

Integration of quantum-resistant cryptography and post-quantum security measures protects AI systems against future computational threats that may emerge as quantum computing technologies mature. Organizations must begin planning for the eventual deployment of quantum computers that could compromise current cryptographic algorithms, ensuring that AI security architectures remain effective against future technological developments. Post-quantum security planning for AI systems must consider the long operational lifecycles of AI models and the potential for retroactive attacks on historical data.

The future evolution of AI security and zero trust will likely be characterized by increased automation, improved threat detection capabilities, and enhanced integration between security tools and AI development platforms. Organizations must balance the benefits of these advancing capabilities with the need to maintain human oversight and control over critical security decisions.

As AI continues to transform business operations and decision-making processes, the security frameworks protecting these systems must evolve to address new threats while enabling continued innovation. The integration of zero-trust principles with advancing AI technologies provides a foundation for comprehensive security that adapts to changing requirements while maintaining the fundamental principles of continuous verification and minimal trust.

Organizations implementing AI security zero trust frameworks today position themselves to take advantage of future developments while building robust security foundations that protect against both current and emerging threats. The investment in comprehensive zero trust architectures for AI systems provides long-term security benefits that support organizational growth and innovation in an increasingly AI-driven business environment.

FAQ

How does zero trust differ from traditional security approaches when protecting AI systems?

Zero trust eliminates the concept of trusted internal networks. It requires verification for every access request, a requirement that is crucial for AI systems that process sensitive data and make autonomous decisions. Traditional perimeter-based security cannot adequately protect against AI-specific threats, such as model extraction and adversarial attacks, that originate within the network. Unlike legacy approaches that grant broad access based on network location, zero trust implements continuous verification and granular access controls tailored to the unique characteristics of artificial intelligence systems.

What are the performance implications of implementing zero-trust security in AI environments?

Modern zero-trust implementations use lightweight inspection models and optimized encryption, adding minimal latency to AI operations. The key lies in implementing semantic inspection and behavioral analytics that operate at machine speed without disrupting real-time inference requirements or training pipeline performance. Organizations typically report less than 5% performance overhead when properly implementing zero-trust controls, with the security benefits far outweighing the minor performance impacts.

How should organizations implement zero trust for third-party AI services and cloud-based models?

Organizations should treat external AI services as untrusted by default, implementing additional encryption layers, data tokenization, and contractual security requirements. This includes monitoring data flows to cloud AI services, implementing secure API gateways, and ensuring compliance with data residency requirements. Zero-trust principles require that even trusted cloud providers undergo continuous verification and that sensitive data receive appropriate protection regardless of its processing location.
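Data tokenization can be sketched as replacing sensitive values with opaque tokens before a prompt leaves the organization, then restoring them in the response. The example below handles only email addresses with a deliberately simple regular expression; the `TokenVault` class and token format are hypothetical, and a real vault would be a protected service rather than an in-memory dictionary.

```python
import re
import secrets

# Simplified email pattern, used here only for illustration.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


class TokenVault:
    """Maps sensitive values to random tokens and back (in memory only)."""

    def __init__(self) -> None:
        self._by_token = {}
        self._by_value = {}

    def tokenize(self, text: str) -> str:
        """Replace each email address with a stable random token."""
        def replace(match):
            value = match.group(0)
            token = self._by_value.get(value)
            if token is None:
                token = f"<TOK_{secrets.token_hex(8)}>"
                self._by_value[value] = token
                self._by_token[token] = value
            return token
        return EMAIL_RE.sub(replace, text)

    def detokenize(self, text: str) -> str:
        """Restore original values in text returned by the external service."""
        for token, value in self._by_token.items():
            text = text.replace(token, value)
        return text
```

The external service sees only tokens, so a leak on its side does not expose the underlying addresses.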

What role does governance play in zero trust AI security strategies?

Governance frameworks must define clear policies for AI model deployment, data access, and security controls while enabling innovation. This includes establishing approval workflows for new AI projects, determining risk tolerance levels, and creating accountability structures for AI security incidents and compliance violations. Effective governance ensures that zero trust implementations align with business objectives while maintaining appropriate security controls and regulatory compliance.

How can organizations measure the effectiveness of their zero trust AI security implementation?

Key metrics include reduction in security incidents, mean time to detection and response for AI-specific threats, compliance audit results, and user productivity measures. Organizations should also track AI model performance to ensure security controls don’t negatively impact business outcomes and innovation capabilities. Regular security assessments, penetration testing results, and incident response metrics provide a comprehensive evaluation of the effectiveness of zero trust for AI systems.
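As a rough illustration of the detection and response metrics, the sketch below computes mean time to detection and mean time to response from a list of incident records. The record layout (occurred, detected, resolved) and the sample timestamps are invented for the example; real figures would come from an incident tracking system.

```python
from datetime import datetime
from statistics import mean


def mean_minutes(incidents, start_field, end_field):
    """Average elapsed minutes between two timestamps across all incidents."""
    deltas = [
        (incident[end_field] - incident[start_field]).total_seconds() / 60
        for incident in incidents
    ]
    return mean(deltas)


# Hypothetical sample data, for illustration only.
incidents = [
    {
        "occurred": datetime(2024, 1, 1, 9, 0),
        "detected": datetime(2024, 1, 1, 9, 30),
        "resolved": datetime(2024, 1, 1, 11, 30),
    },
    {
        "occurred": datetime(2024, 1, 2, 14, 0),
        "detected": datetime(2024, 1, 2, 14, 10),
        "resolved": datetime(2024, 1, 2, 15, 10),
    },
]

mttd = mean_minutes(incidents, "occurred", "detected")  # (30 + 10) / 2 = 20 minutes
mttr = mean_minutes(incidents, "detected", "resolved")  # (120 + 60) / 2 = 90 minutes
```

Tracking these two values per quarter, alongside incident counts, gives a simple trend line for whether zero trust controls are shortening attacker dwell time.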

Protecting Against Data Leakage

Protecting against data leakage is a core objective of a zero trust security architecture. Data leakage occurs when sensitive data is accessed, transmitted, or stored in an unauthorized manner, and it poses significant security risks to organizations. Mitigating these risks requires strict access controls and continuous monitoring, so that only authorized users and systems can reach sensitive data.

One of the primary challenges in protecting against data leakage is the complexity of modern networks and the vast amounts of data being transmitted. AI systems and AI models can exacerbate this challenge by introducing new vulnerabilities and attack surfaces. However, AI-powered security tools can also provide deep insights into network traffic and user behavior, enabling security practitioners to detect anomalies and respond to emerging threats.

To protect against data leakage, organizations should implement a zero trust security model that assumes all users and systems are potential threats. This model requires continuous verification of user identities, access rights, and system behavior, so that access is granted only when it is authorized. Role-based access controls, multi-factor authentication, and least-privilege principles limit access to sensitive data and prevent unauthorized users from reading or transmitting it.
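The combination of role-based access control, multi-factor authentication, and least privilege can be sketched as a deny-by-default decision function. This is a simplified illustration, not a production policy engine; the role names, resources, and the AccessRequest type are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical role-to-permission map: each role gets only what it needs.
ROLE_PERMISSIONS = {
    "ml_engineer": {("training_data", "read"), ("model_registry", "write")},
    "analyst": {("inference_api", "invoke")},
}


@dataclass(frozen=True)
class AccessRequest:
    user: str
    role: str
    resource: str
    action: str
    mfa_verified: bool


def is_allowed(request):
    """Deny by default; allow only MFA-verified requests that match an explicit grant."""
    if not request.mfa_verified:
        return False
    granted = ROLE_PERMISSIONS.get(request.role, set())
    return (request.resource, request.action) in granted


ok = is_allowed(AccessRequest("dana", "ml_engineer", "training_data", "read", True))
no_mfa = is_allowed(AccessRequest("dana", "ml_engineer", "training_data", "read", False))
out_of_scope = is_allowed(AccessRequest("lee", "analyst", "training_data", "read", True))
```

Because the function is evaluated on every request rather than once per session, a revoked role or a failed MFA check takes effect immediately.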

In addition to these measures, organizations should implement security policies and procedures to prevent data poisoning and model theft. Data poisoning occurs when malicious actors inject false or misleading records into the data used to train AI models, while model theft involves stealing proprietary AI models or recreating them from their outputs. To counter these threats, organizations should apply robust data protection measures, such as encryption and secure key management, and ensure that all data is handled and transmitted securely.

Organizations should also continuously monitor their networks and systems for suspicious activity and maintain incident response plans so they can respond quickly and effectively to security incidents. Behavioral analytics supports this by establishing a baseline of normal user and system behavior and flagging deviations for security teams to investigate.
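A very simple form of behavioral analytics is a z-score check against a per-user baseline. The sketch below assumes the monitored signal is an hourly request count and uses a threshold of three standard deviations; both choices are illustrative, and production tools typically use richer features and models.

```python
from statistics import mean, stdev


def is_anomalous(history, current, threshold=3.0):
    """Flag a value that deviates from the historical baseline by more than `threshold` sigmas."""
    if len(history) < 2:
        return False  # not enough data to form a baseline
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return current != mu
    return abs(current - mu) / sigma > threshold


# Hypothetical hourly request counts for one service account.
baseline = [100, 110, 95, 105, 90]

spike = is_anomalous(baseline, 500)   # far above normal volume
normal = is_anomalous(baseline, 104)  # within the usual range
```

A sudden spike in requests to an inference endpoint is one signal that could indicate bulk data exfiltration or a model extraction attempt, so a flag like this would typically trigger step-up verification or an alert rather than an automatic block.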

Protecting against data leakage therefore requires a comprehensive zero trust security architecture that combines strict access controls, continuous monitoring, and AI-powered security tools. By treating all users and systems as potential threats and enforcing these controls consistently, organizations can reduce the risk of data leakage and preserve the security and integrity of their sensitive data. As AI takes on a larger role in modern networks, zero trust policies and procedures become essential to safeguarding operations.